This blog post summarizes Hugging Face's a smol course.

Hugging Face A Smol Course

Course link: https://huggingface.co/learn/smol-course/

0. Welcome to the Fine-Tuning Course

Welcome to the comprehensive (and smollest) course to Fine-Tuning Language Models!

This free course will take you on a journey, from beginner to expert, in understanding, implementing, and optimizing fine-tuning techniques for large language models.

This course is smol but fast! It’s for software developers and engineers looking to fast track their LLM fine-tuning skills.

What to expect from this course?

In this course, you will:

- 📖 Study instruction tuning, supervised fine-tuning, preference alignment, evaluation, vision language models… and more!

- 🧑‍💻 Learn to use established fine-tuning frameworks and tools like TRL and Transformers.

- 💾 Share your projects and explore fine-tuning applications created by the community.

- 🏆 Participate in challenges where you will evaluate your fine-tuned models against other students.

- 🎓 Earn a certificate of completion by completing assignments.

At the end of this course, you’ll understand how to fine-tune language models effectively and build specialized AI applications using the latest fine-tuning techniques.

Don’t forget to sign up to the course!

What does the course look like?

The course is composed of:

- Foundational Units: where you learn fine-tuning concepts in theory.

- Hands-on: where you’ll learn to use established fine-tuning frameworks to adapt your models. These hands-on sections will have pre-configured environments.

- Use case assignments: where you’ll apply the concepts you’ve learned to solve a real-world problem that you’ll choose.

- Collaborations: We’re collaborating with Hugging Face’s partners to give you the latest fine-tuning implementations and tools.

This course is a living project, evolving with your feedback and contributions! Feel free to open issues and PRs in GitHub, and engage in discussions in our Discord server.

What’s the syllabus?

Here is the general syllabus for the course. A more detailed list of topics will be released with each unit.

Each chapter in this course is designed to be completed in 1 week, with approximately 3-4 hours of work per week.

| # | Topic | Description |

|---|

| 1 | Instruction Tuning | Supervised fine-tuning, chat templates, instruction following |

| 2 | Evaluation | Benchmarks and custom domain evaluation |

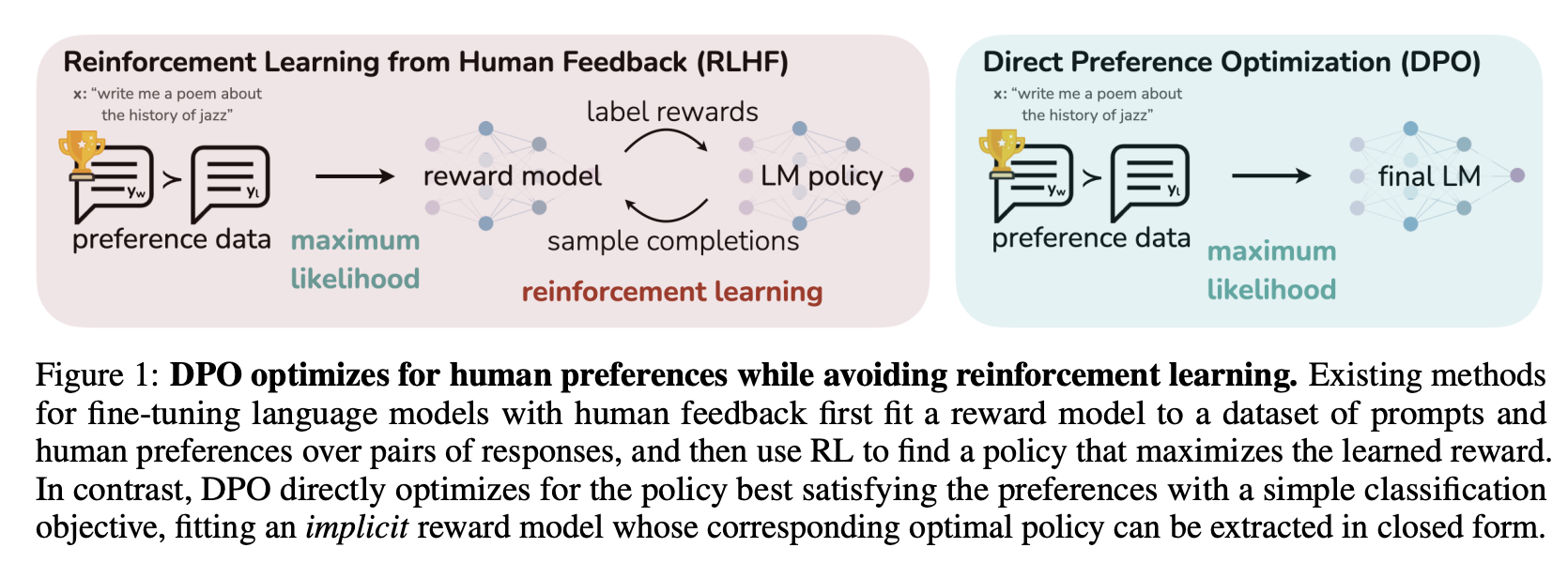

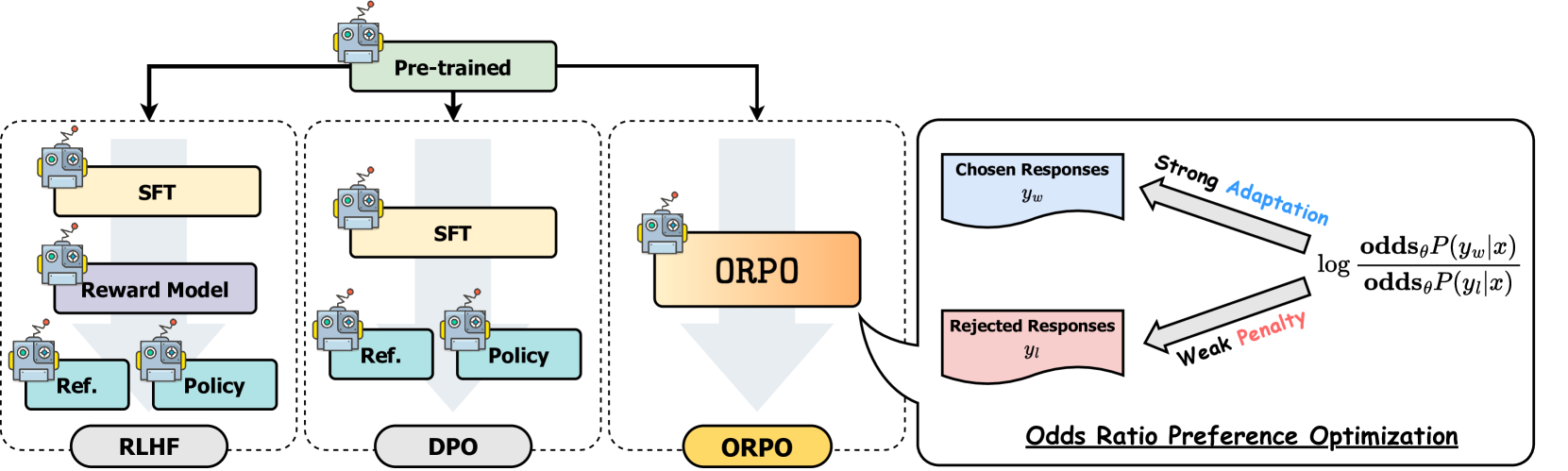

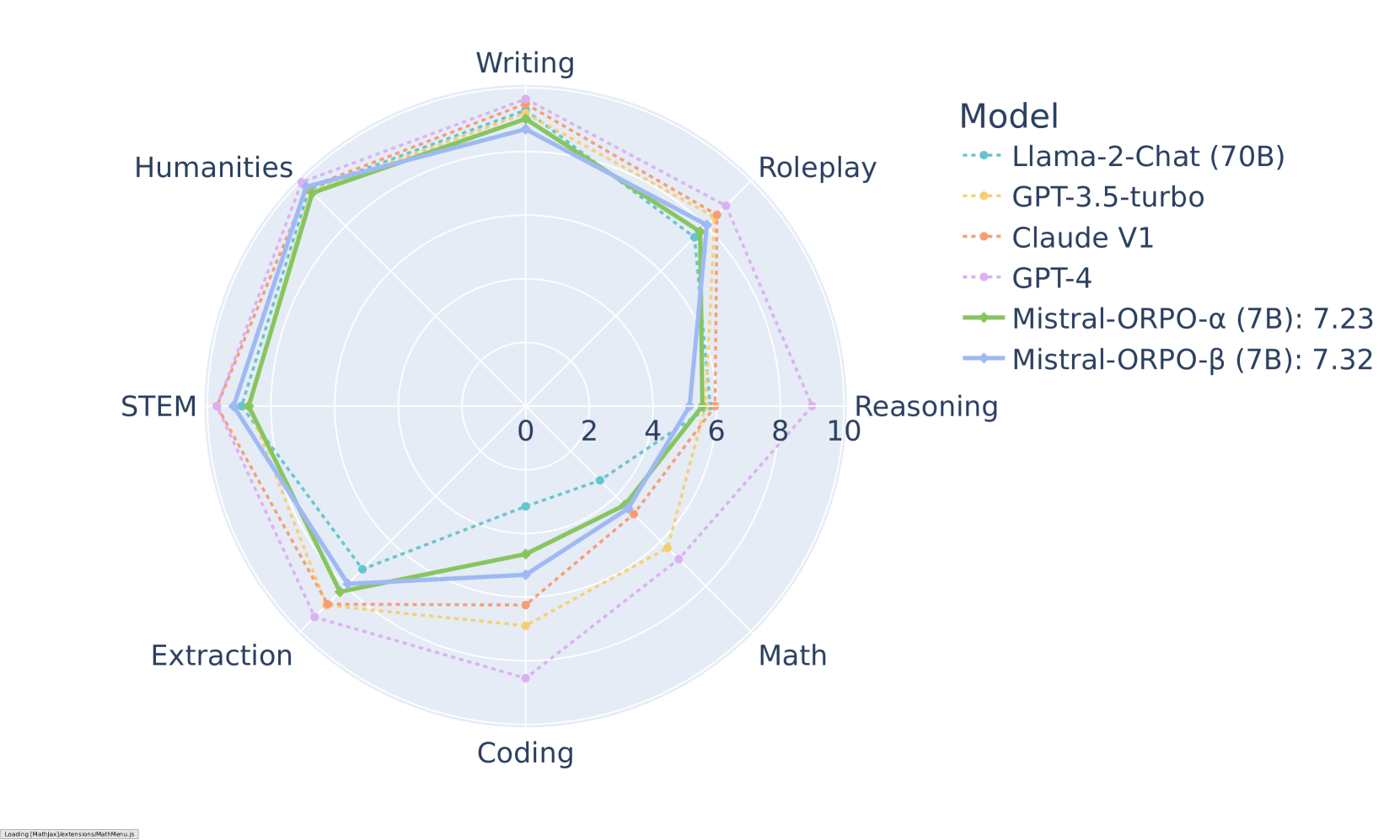

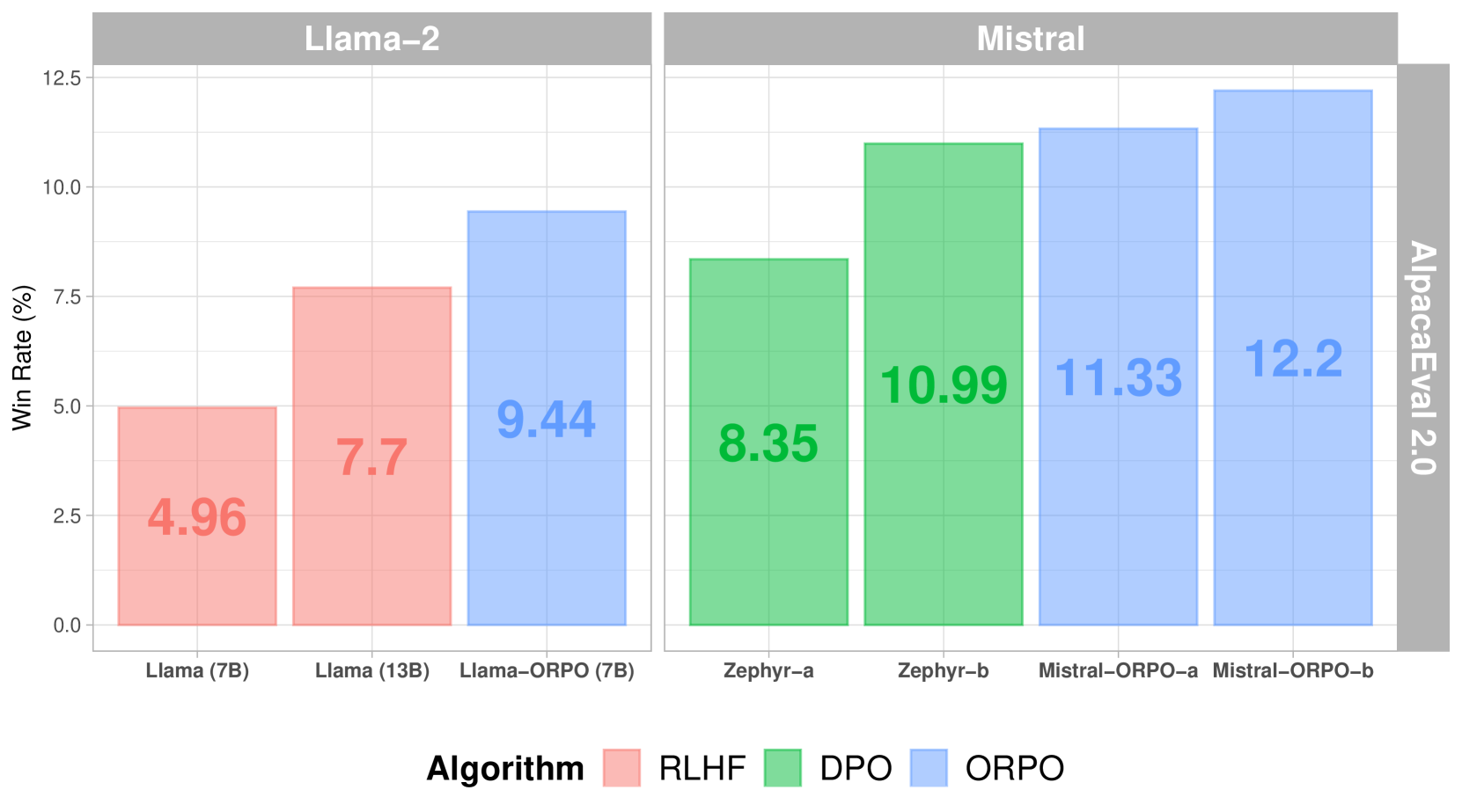

| 3 | Preference Alignment | Aligning models to human preferences with algorithms like DPO. |

| 4 | Vision Language Models | Adapt and use multimodal models |

| 5 | Reinforcement Learning | Optimizing models with based on reinforcement policies. |

| 6 | Synthetic Data | Generate synthetic datasets for custom domains |

| 7 | Award Ceremony | Showcase projects and celebrate |

What are the prerequisites?

To be able to follow this course, you should have:

- Basic understanding of AI and LLM concepts

- Familiarity with Python programming and machine learning fundamentals

- Experience with PyTorch or similar deep learning frameworks

- Understanding of transformers architecture basics

[!TIP] If you don’t have any of these, don’t worry. Check out the LLM Course to get started. The above courses are not prerequisites in themselves, so if you understand the concepts of LLMs and transformers, you can start the course now!


1. Instruction Tuning

Introduction to Instruction Tuning with SmolLM3

Welcome to the smollest course of fine-tuning! This module will guide you through instruction tuning using SmolLM3, Hugging Face’s latest 3B parameter model that achieves state-of-the-art performance for its size, while remaining accessible for learning and experimentation.

[!TIP] By the end of this course you will be fine-tuning an LLM with SFT. This course is smol but fast! If you'd like a smoother gradient, check out the LLM Course.

After completing this unit (and the assignment), don’t forget to test your knowledge with the quiz!

What is Instruction Tuning?

Instruction tuning is the process of adapting pre-trained language models to follow human instructions and engage in conversations. While base models like SmolLM3-3B-Base are trained to predict the next token, instruction-tuned models like SmolLM3-3B are specifically trained to:

- Follow user instructions accurately

- Engage in natural conversations

- Provide helpful, harmless, and honest responses

- Maintain context across multi-turn interactions

- Use tools or MCP servers to perform tasks

This transformation from a text completion model to an instruction-following assistant is achieved through supervised fine-tuning on carefully curated datasets.

[!TIP] The LLM Course covers instruction tuning in more depth.

Why SmolLM3 for Learning?

SmolLM3 is perfect for learning instruction tuning because it:

- Fits in a single GPU at a reasonable cost

- Achieves competitive performance

- Supports multilingual conversations

- Supports extended context length up to 8k tokens (with some variants supporting longer contexts up to 128k tokens)

- Features dual-mode reasoning with explicit thinking capabilities

- Comes with complete training recipes so you understand exactly how it was built

Module Overview

In this comprehensive module, we will explore four key areas:

Chat Templates

Chat templates are the foundation of instruction tuning - they structure interactions between users and AI models, ensuring consistent and contextually appropriate responses. You’ll learn:

- How SmolLM3’s chat template works

- Converting conversations to the proper format

- Working with system prompts and multi-turn conversations

- Using the Transformers library’s built-in template support

For detailed information, see Chat Templates.

Supervised Fine-Tuning (SFT)

Supervised Fine-Tuning is the core technique for adapting pre-trained models to follow instructions. You’ll master:

- The theory behind SFT and when to use it

- Working with the SmolTalk2 dataset

- Using TRL’s SFTTrainer for efficient training

- Best practices for data preparation and training configuration

For a comprehensive guide, see Supervised Fine-Tuning.

Hands-on Exercises

Put your knowledge into practice with progressively challenging exercises:

- Process datasets for instruction tuning

- Fine-tune SmolLM3 on different tasks

- Use both Python APIs and CLI tools

- Compare base model vs fine-tuned model performance

Complete exercises and examples are in Exercises.

Hugging Face Jobs

Hugging Face Jobs is a fully managed cloud infrastructure for training models without the hassle of setting up GPUs, managing dependencies, or configuring environments locally. This is particularly valuable for SFT training, which can be resource-intensive and time-consuming.

For a comprehensive guide, see Hugging Face Jobs.

What You’ll Build

By the end of this module, you’ll have:

- Fine-tuned your own SmolLM3 model on a custom dataset

- Understanding of chat template formatting and conversation structure

- Experience with both programmatic and CLI-based training workflows

- A model deployed to Hugging Face Hub that others can use

- Foundation knowledge for more advanced fine-tuning techniques

Let’s dive into the fascinating world of instruction tuning!


Chat Templates

Chat templates are the foundation of instruction tuning - they provide a consistent format for structuring interactions between language models, users, and external tools. Think of them as the “grammar” that teaches models how to understand conversations, distinguish between different speakers, and respond appropriately.

Base Models vs Instruct Models

First, we need to understand the difference between base and instruct models. This is crucial for effective fine-tuning.

Base Model (SmolLM3-3B-Base): Trained on raw text to predict the next token. If you give it “The weather today is”, it might continue with “sunny and warm” or any plausible continuation.

Instruct Model (SmolLM3-3B): Fine-tuned to follow instructions and engage in conversations. If you ask “What’s the weather like?”, it understands this as a question requiring a response as a new message.

The journey from base to instruct model involves:

- Chat template: A structured format for interactions between language models, users, and external tools.

- Supervised fine-tuning: The technique used to train the model to generate appropriate responses.

SmolLM3 uses the ChatML (Chat Markup Language) format, which has become a standard in the industry due to its clarity and flexibility.

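[!TIP] Markup is a general term for a system that defines structure, style, or semantics by inserting tags into text. Typical examples are HTML and XML; Markdown is a lightweight markup language.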

In the next chapter, we will go into preference alignment. This is a technique that allows you to fine-tune a model to generate responses that humans prefer.

Pipeline Usage: Automated Chat Processing

The easiest way to use an open source large language model is to use the pipeline abstraction in 🤗 Transformers. It handles chat templates seamlessly, making it easy to use chat models without manual template management. So much so, you won’t even need to know the chat template format.

from transformers import pipeline

### Initialize the pipeline

pipe = pipeline("text-generation", "HuggingFaceTB/SmolLM3-3B", device_map="auto")

### Define your conversation

messages = [

{"role": "system", "content": "You are a friendly chatbot who always responds in the style of a pirate"},

{"role": "user", "content": "How many helicopters can a human eat in one sitting?"},

]

### Generate response - pipeline handles chat templates automatically

response = pipe(messages, max_new_tokens=128, temperature=0.7)

print(response[0]['generated_text'][-1]) ### Print the assistant's response

Output:

{

'role': 'assistant',

'content': "Matey, I'm afraid I must inform ye that humans cannot eat helicopters. Helicopters are not food, they are flying machines. Food is meant to be eaten, like a hearty plate o' grog, a savory bowl o' stew, or a delicious loaf o' bread. But helicopters, they be for transportin' and movin' around, not for eatin'. So, I'd say none, me hearties. None at all."

}

In this example, the pipeline automatically:

- Applies the correct chat template for the model based on the model’s tokenizer configuration on the Hugging Face Hub repo.

- Handles tokenization and generation automatically based on the model’s tokenizer configuration.

- Returns structured output with role information

- Manages generation parameters and stopping criteria

Advanced Pipeline Usage

We can take fine-grained control of the generation process by passing in a generation_config dictionary to the pipeline abstraction.

### Configure generation parameters

generation_config = {

"max_new_tokens": 200,

"temperature": 0.8,

"do_sample": True,

"top_p": 0.9,

"repetition_penalty": 1.1

}

### Multi-turn conversation

conversation = [

{"role": "system", "content": "You are a helpful math tutor."},

{"role": "user", "content": "Can you help me with calculus?"},

]

### Generate first response

response = pipe(conversation, **generation_config)

conversation = response[0]['generated_text']

### Continue the conversation

conversation.append({"role": "user", "content": "What is a derivative?"})

response = pipe(conversation, **generation_config)

print("Final conversation:")

for message in response[0]['generated_text']:
    print(f"{message['role']}: {message['content']}")
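The append-then-regenerate pattern above can be wrapped in a small helper. The sketch below is illustrative only: `ChatSession`, `echo_model`, and the `generate` callable are hypothetical names, and `generate` stands in for a real model call such as the pipeline used above. A stub is used so the example runs without downloading a model.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


class ChatSession:
    """Keeps the running message list for a multi-turn conversation (hypothetical helper)."""

    def __init__(self, generate: Callable[[List[Message]], str], system_prompt: str = ""):
        self.generate = generate
        self.messages: List[Message] = []
        if system_prompt:
            self.messages.append({"role": "system", "content": system_prompt})

    def ask(self, user_text: str) -> str:
        """Append the user turn, get a reply, and store it as an assistant turn."""
        self.messages.append({"role": "user", "content": user_text})
        reply = self.generate(self.messages)
        self.messages.append({"role": "assistant", "content": reply})
        return reply


def echo_model(messages: List[Message]) -> str:
    """Stub standing in for a real model call; it only reports the user turn count."""
    user_turns = sum(1 for m in messages if m["role"] == "user")
    return f"(stub reply to user turn {user_turns})"


session = ChatSession(echo_model, system_prompt="You are a helpful math tutor.")
session.ask("Can you help me with calculus?")
session.ask("What is a derivative?")
for message in session.messages:
    print(f"{message['role']}: {message['content']}")
```

With a real pipeline, `generate` could plausibly be a small function that calls `pipe(messages, **generation_config)` and returns the `content` of the last message in `response[0]['generated_text']`.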

Understanding SmolLM3’s Chat Template

Now that we understand basic inference with a chat model, let’s dive into the chat template format. SmolLM3 uses a common chat template that handles multiple conversation types. Let’s examine how it works:

If you want to explore chat templates hands-on, you can try out the chat template playground.

SmolLM3 uses the ChatML format with special tokens that clearly delineate different parts of the conversation. You can see this format in https://huggingface.co/HuggingFaceTB/SmolLM3-3B/blob/main/chat_template.jinja. For example, the system message is marked with <|im_start|>system and <|im_end|>.

<|im_start|>system

You are a helpful assistant focused on technical topics.<|im_end|>

<|im_start|>user

Hi there!<|im_end|>

<|im_start|>assistant

Nice to meet you!<|im_end|>

<|im_start|>user

Can I ask a question?<|im_end|>

<|im_start|>assistant

Key Components:

- <|im_start|> and <|im_end|>: Special tokens that mark the beginning and end of each message

- Roles: system, user, assistant (and tool for function calling)

- Content: The actual message text between the role declaration and <|im_end|>
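To make the three components concrete, here is a minimal hand-rolled ChatML renderer. It is a sketch for illustration, not SmolLM3's actual template (which also injects metadata and handles tools); `to_chatml` is a hypothetical helper name.

```python
from typing import Dict, List


def to_chatml(messages: List[Dict[str, str]], add_generation_prompt: bool = False) -> str:
    """Render messages in plain ChatML (illustrative; not SmolLM3's full template)."""
    parts = []
    for message in messages:
        # Each message: start token + role, newline, content, end token.
        parts.append(f"<|im_start|>{message['role']}\n{message['content']}<|im_end|>\n")
    if add_generation_prompt:
        # An open assistant header tells the model to write the next reply.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


example = [
    {"role": "system", "content": "You are a helpful assistant focused on technical topics."},
    {"role": "user", "content": "Hi there!"},
]
print(to_chatml(example, add_generation_prompt=True))
```

In practice you would let the tokenizer's own template do this work; the sketch only shows what the markers delimit.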

Dual-Mode Reasoning Support

SmolLM3 belongs to a new category of models that can either reason step by step or answer directly. It enables this through special formatting and a parameter: if the parameter is set to think, the model shows its reasoning process. This is communicated to the model through the thinking token.

Standard Mode (no_think):

<|im_start|>user

What is 15 × 24?<|im_end|>

<|im_start|>assistant

15 × 24 = 360<|im_end|>

Thinking Mode (think):

<|im_start|>user

What is 15 × 24?<|im_end|>

<|im_start|>assistant

<|thinking|>

I need to multiply 15 by 24. Let me break this down:

15 × 24 = 15 × (20 + 4) = (15 × 20) + (15 × 4) = 300 + 60 = 360

</|thinking|>

15 × 24 = 360<|im_end|>

This dual-mode capability allows SmolLM3 to show its reasoning process when needed, making it perfect for combining complex and simple tasks.
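One way the mode might be requested from code is through a flag in the system prompt. The sketch below rests on an assumption: that the flags are spelled `/think` and `/no_think`, matching the `Reasoning Mode: /think` metadata line that SmolLM3's template renders. `with_reasoning_flag` is a hypothetical helper; check the model card for the switches that are actually supported.

```python
from typing import Dict, List


def with_reasoning_flag(messages: List[Dict[str, str]], think: bool) -> List[Dict[str, str]]:
    """Return a copy of messages whose system prompt carries a reasoning flag.

    Assumption: the flags are spelled "/think" and "/no_think".
    """
    flag = "/think" if think else "/no_think"
    updated = [dict(m) for m in messages]  # copy so the caller's list is untouched
    if updated and updated[0]["role"] == "system":
        updated[0]["content"] = f"{updated[0]['content']} {flag}".strip()
    else:
        updated.insert(0, {"role": "system", "content": flag})
    return updated


question = [{"role": "user", "content": "What is 15 × 24?"}]
print(with_reasoning_flag(question, think=False))
```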

Working with SmolLM3 Chat Templates in Code

The transformers library automatically handles chat template formatting through the tokenizer. This means you only need to structure your messages correctly, and the library takes care of the special token formatting. Here’s how to work with SmolLM3’s chat template:

from transformers import AutoTokenizer

### Load SmolLM3's tokenizer

tokenizer = AutoTokenizer.from_pretrained("HuggingFaceTB/SmolLM3-3B")

### Structure your conversation as a list of message dictionaries

messages = [

{"role": "system", "content": "You are a helpful assistant focused on technical topics."},

{"role": "user", "content": "Can you explain what a chat template is?"},

{"role": "assistant", "content": "A chat template structures conversations between users and AI models by providing a consistent format that helps the model understand different roles and maintain context."}

]

### Apply the chat template

formatted_chat = tokenizer.apply_chat_template(

messages,

tokenize=False, ### Return string instead of tokens

add_generation_prompt=True ### Add prompt for next assistant response

)

print(formatted_chat)

Output:

<|im_start|>system

You are a helpful assistant focused on technical topics.<|im_end|>

<|im_start|>user

Can you explain what a chat template is?<|im_end|>

<|im_start|>assistant

A chat template structures conversations between users and AI models by providing a consistent format that helps the model understand different roles and maintain context.<|im_end|>

<|im_start|>assistant

Understanding the Message Structure

Each message in the conversation follows a simple dictionary format:

- role: Identifies who is speaking (system, user, assistant, or tool).

- content: The actual message content.

Message Types:

- System Messages: Set behavior and context for the entire conversation

- User Messages: Questions, requests, or statements from the human user

- Assistant Messages: Responses from the AI model

- Tool Messages: Results from function calls (for advanced use cases)
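Because the message format is just a list of dictionaries, it is easy to check before it reaches the tokenizer. The following is a minimal sketch; `validate_messages` is a hypothetical helper, and the rule that a system message must come first is a convention assumed here rather than something every template enforces.

```python
from typing import Dict, List

ALLOWED_ROLES = {"system", "user", "assistant", "tool"}


def validate_messages(messages: List[Dict[str, str]]) -> List[str]:
    """Return a list of problems found in a conversation (empty list means OK)."""
    problems = []
    for index, message in enumerate(messages):
        role = message.get("role")
        if role not in ALLOWED_ROLES:
            problems.append(f"message {index}: unknown role {role!r}")
        if "content" not in message:
            problems.append(f"message {index}: missing 'content'")
        # Convention assumed here: a system message, if present, comes first.
        if role == "system" and index != 0:
            problems.append(f"message {index}: system message is not first")
    return problems


good = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "Hello!"},
]
bad = [{"role": "user", "content": "Hi"}, {"role": "bot"}]
print(validate_messages(good))
print(validate_messages(bad))
```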

System Messages: Setting the Context

System messages are crucial for controlling SmolLM3’s behavior. They act as persistent instructions that influence all subsequent interactions. To create a system message, you can use the system role and the content key:

### Professional assistant

system_message = {

"role": "system",

"content": "You are a professional customer service agent. Always be polite, clear, and helpful."

}

### Technical expert

system_message = {

"role": "system",

"content": "You are a senior software engineer. Provide detailed technical explanations with code examples when appropriate."

}

### Creative assistant

system_message = {

"role": "system",

"content": "You are a creative writing assistant. Help users craft engaging stories and provide constructive feedback."

}

[!TIP] System messages have a significant impact on the model’s behavior. They are the first message in the conversation and they set the tone for the entire conversation. They should be specific, set boundaries, provide context, and use examples.

Multi-Turn Conversations

SmolLM3 can maintain context across multiple conversation turns. Each message builds upon the previous context. For example, the following code creates a conversation with a helpful programming tutor:

conversation = [

{"role": "system", "content": "You are a helpful programming tutor."},

{"role": "user", "content": "I'm learning Python. Can you explain functions?"},

{"role": "assistant", "content": "Functions in Python are reusable blocks of code that perform specific tasks. They're defined using the 'def' keyword."},

{"role": "user", "content": "Can you show me an example?"},

{"role": "assistant", "content": "Sure! Here's a simple function:\n\n```python\ndef greet(name):\n return f'Hello, {name}!'\n\nresult = greet('Alice')\nprint(result) ### Output: Hello, Alice!\n```"},

{"role": "user", "content": "How do I make it return multiple values?"},

]
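Since every turn is re-sent with the full history, long conversations eventually exceed the context window. Below is a rough sketch of one trimming strategy: keep the system message and drop the oldest turns first. `trim_history` and `rough_token_count` are hypothetical names, and the word-count function is a crude stand-in for a real tokenizer.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def rough_token_count(text: str) -> int:
    """Crude stand-in for a tokenizer: counts whitespace-separated words."""
    return len(text.split())


def trim_history(
    messages: List[Message],
    max_tokens: int,
    count_tokens: Callable[[str], int] = rough_token_count,
) -> List[Message]:
    """Keep the system message plus the most recent turns that fit the budget."""
    system = [m for m in messages if m["role"] == "system"]
    turns = [m for m in messages if m["role"] != "system"]
    budget = max_tokens - sum(count_tokens(m["content"]) for m in system)

    kept: List[Message] = []
    for message in reversed(turns):  # walk from newest to oldest
        cost = count_tokens(message["content"])
        if cost > budget:
            break
        kept.append(message)
        budget -= cost
    return system + list(reversed(kept))


history = [
    {"role": "system", "content": "You are a helpful programming tutor."},
    {"role": "user", "content": "I'm learning Python. Can you explain functions?"},
    {"role": "assistant", "content": "Functions are reusable blocks of code defined with def."},
    {"role": "user", "content": "Can you show me an example?"},
]
trimmed = trim_history(history, max_tokens=22)
print([m["role"] for m in trimmed])
```

A production version would count tokens with the model's tokenizer and might drop whole user/assistant pairs so the trimmed history never starts with an assistant turn.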

Generation Prompts: Controlling Model Behavior

One of the most important concepts in chat templates is the generation prompt. This tells the model when it should start generating a response versus continuing existing text.

Understanding add_generation_prompt

The add_generation_prompt parameter controls whether the template adds tokens that indicate the start of a bot response:

from transformers import AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("HuggingFaceTB/SmolLM3-3B")

messages = [

{"role": "user", "content": "Hi there!"},

{"role": "assistant", "content": "Nice to meet you!"},

{"role": "user", "content": "Can I ask a question?"}

]

### Without generation prompt - for completed conversations

formatted_without = tokenizer.apply_chat_template(

messages,

tokenize=False,

add_generation_prompt=False

)

print("Without generation prompt:")

print(formatted_without)

print("\n" + "="*50 + "\n")

### With generation prompt - for inference

formatted_with = tokenizer.apply_chat_template(

messages,

tokenize=False,

add_generation_prompt=True

)

print("With generation prompt:")

print(formatted_with)

Output:

Without generation prompt:

<|im_start|>user

Hi there!<|im_end|>

<|im_start|>assistant

Nice to meet you!<|im_end|>

<|im_start|>user

Can I ask a question?<|im_end|>

==================================================

With generation prompt:

<|im_start|>user

Hi there!<|im_end|>

<|im_start|>assistant

Nice to meet you!<|im_end|>

<|im_start|>user

Can I ask a question?<|im_end|>

<|im_start|>assistant

The generation prompt ensures that when the model generates text, it will write a bot response instead of doing something unexpected like continuing the user’s message.

When to Use Generation Prompts

- For inference: Use add_generation_prompt=True when you want the model to generate a response.

- For training: Use add_generation_prompt=False when preparing training data with complete conversations.

- For evaluation: Use add_generation_prompt=True to test model responses.
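The three rules above can be captured in one place so that call sites do not have to remember them. This is a small sketch; `template_kwargs` is a hypothetical helper, and it only builds the keyword arguments rather than calling the tokenizer.

```python
def template_kwargs(purpose: str) -> dict:
    """Map a use case to apply_chat_template keyword arguments (illustrative helper)."""
    if purpose in ("inference", "evaluation"):
        # The model should write the next assistant turn.
        return {"tokenize": False, "add_generation_prompt": True}
    if purpose == "training":
        # Conversations are already complete, so no open assistant header.
        return {"tokenize": False, "add_generation_prompt": False}
    raise ValueError(f"unknown purpose: {purpose!r}")


print(template_kwargs("training"))
print(template_kwargs("inference"))
```

It would then be used as `tokenizer.apply_chat_template(messages, **template_kwargs("training"))`.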

Continuing Final Messages: Advanced Response Control

The continue_final_message parameter allows you to make the model continue the last message in a conversation instead of starting a new one. This is particularly useful for “prefilling” responses or ensuring specific output formats.

Basic Example

### Prefill a JSON response

chat = [

{"role": "user", "content": "Can you format the answer in JSON?"},

{"role": "assistant", "content": '{"name": "'},

]

### Continue the final message

formatted_chat = tokenizer.apply_chat_template(

chat,

tokenize=False,

continue_final_message=True

)

print("Continuing final message:")

print(formatted_chat)

print("\n" + "="*50 + "\n")

### Compare with starting a new message

formatted_new = tokenizer.apply_chat_template(

chat,

tokenize=False,

add_generation_prompt=True

)

print("Starting new message:")

print(formatted_new)

Output:

Continuing final message:

<|im_start|>user

Can you format the answer in JSON?<|im_end|>

<|im_start|>assistant

{"name": "

==================================================

Starting new message:

<|im_start|>user

Can you format the answer in JSON?<|im_end|>

<|im_start|>assistant

{"name": "<|im_end|>

<|im_start|>assistant

Practical Applications

1. Structured Output Generation:

### Force the model to complete a specific format

messages = [

{"role": "system", "content": "You are a helpful assistant that always responds in JSON format."},

{"role": "user", "content": "What's the capital of France?"},

{"role": "assistant", "content": '{\n "question": "What\'s the capital of France?",\n "answer": "'}

]

### The model will continue with just the answer, maintaining JSON structure

2. Code Completion:

### Guide the model to complete code

messages = [

{"role": "user", "content": "Write a Python function to calculate factorial"},

{"role": "assistant", "content": "def factorial(n):\n if n == 0:\n return 1\n else:\n return n * "}

]

### Model will complete the recursive call

3. Step-by-Step Reasoning:

### Guide the model through structured thinking

messages = [

{"role": "user", "content": "Solve: 2x + 5 = 13"},

{"role": "assistant", "content": "Let me solve this step by step:\n\nStep 1: "}

]

### Model will continue with the first step

Important Notes

- You cannot use add_generation_prompt=True and continue_final_message=True together

- The final message must have the “assistant” role when using continue_final_message=True

- This feature removes end-of-sequence tokens from the final message
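The first two notes are easy to check up front, before the tokenizer is called. Here is a minimal sketch of such a guard; `check_continuation_args` is a hypothetical helper, and the library's own error messages may differ.

```python
from typing import Dict, List


def check_continuation_args(
    messages: List[Dict[str, str]],
    add_generation_prompt: bool = False,
    continue_final_message: bool = False,
) -> None:
    """Raise ValueError if the arguments break the rules listed above."""
    if add_generation_prompt and continue_final_message:
        raise ValueError("add_generation_prompt and continue_final_message are mutually exclusive")
    if continue_final_message:
        if not messages or messages[-1]["role"] != "assistant":
            raise ValueError("continue_final_message requires a final assistant message")


prefill = [
    {"role": "user", "content": "Can you format the answer in JSON?"},
    {"role": "assistant", "content": '{"name": "'},
]
check_continuation_args(prefill, continue_final_message=True)  # passes silently

try:
    check_continuation_args(prefill[:1], continue_final_message=True)
except ValueError as error:
    print(f"Rejected: {error}")
```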

Working with Reasoning Mode

SmolLM3’s dual-mode reasoning can be controlled through special formatting:

Standard vs Thinking Mode

### Standard mode - direct answer

standard_messages = [

{"role": "user", "content": "What is 15 × 24?"},

{"role": "assistant", "content": "15 × 24 = 360"}

]

### Thinking mode - show reasoning process

thinking_messages = [

{"role": "user", "content": "What is 15 × 24?"},

{"role": "assistant", "content": "<|thinking|>\nI need to multiply 15 by 24. Let me break this down:\n15 × 24 = 15 × (20 + 4) = (15 × 20) + (15 × 4) = 300 + 60 = 360\n</|thinking|>\n\n15 × 24 = 360"}

]

### Apply templates

standard_formatted = tokenizer.apply_chat_template(standard_messages, tokenize=False)

thinking_formatted = tokenizer.apply_chat_template(thinking_messages, tokenize=False)

print("Standard mode:")

print(standard_formatted)

print("\nThinking mode:")

print(thinking_formatted)

Training with Thinking Mode

When preparing datasets with thinking mode, you can control whether to include the reasoning:

def create_thinking_example(question, answer, reasoning=None):
    """Create a training example with optional thinking"""
    if reasoning:
        assistant_content = f"<|thinking|>\n{reasoning}\n</|thinking|>\n\n{answer}"
    else:
        assistant_content = answer
    return [
        {"role": "user", "content": question},
        {"role": "assistant", "content": assistant_content}
    ]

### Example usage

math_example = create_thinking_example(

question="What is the derivative of x²?",

answer="The derivative of x² is 2x",

reasoning="Using the power rule: d/dx(x^n) = n·x^(n-1)\nFor x²: n=2, so d/dx(x²) = 2·x^(2-1) = 2x"

)
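The reverse operation, separating the reasoning from the final answer in a generated response, is useful when you want to show or score only the answer. This is a sketch that assumes the tag spelling used in this post's examples; `split_thinking` is a hypothetical helper.

```python
import re
from typing import Optional, Tuple

# Tag spelling follows the examples in this post; adjust if your template differs.
THINKING_PATTERN = re.compile(r"<\|thinking\|>\s*(.*?)\s*</\|thinking\|>\s*", re.DOTALL)


def split_thinking(text: str) -> Tuple[Optional[str], str]:
    """Return (reasoning, answer); reasoning is None when no thinking block exists."""
    match = THINKING_PATTERN.search(text)
    if match is None:
        return None, text.strip()
    answer = (text[: match.start()] + text[match.end():]).strip()
    return match.group(1), answer


sample = "<|thinking|>\nUsing the power rule, d/dx(x^2) = 2x\n</|thinking|>\n\nThe derivative of x² is 2x"
reasoning, answer = split_thinking(sample)
print(reasoning)
print(answer)
```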

Modern chat templates support tool usage and function calling. Here’s how to work with tools in SmolLM3:

### Define available tools

tools = [

{

"type": "function",

"function": {

"name": "get_weather",

"description": "Get the current weather for a location",

"parameters": {

"type": "object",

"properties": {

"location": {

"type": "string",

"description": "The city and state, e.g. San Francisco, CA"

},

"unit": {

"type": "string",

"enum": ["celsius", "fahrenheit"],

"description": "The temperature unit"

}

},

"required": ["location"]

}

}

},

{

"type": "function",

"function": {

"name": "calculate",

"description": "Perform mathematical calculations",

"parameters": {

"type": "object",

"properties": {

"expression": {

"type": "string",

"description": "Mathematical expression to evaluate"

}

},

"required": ["expression"]

}

}

}

]

### Conversation with tool usage

messages = [

{"role": "system", "content": "You are a helpful assistant with access to tools."},

{"role": "user", "content": "What's the weather like in Paris?"},

{

"role": "assistant",

"content": "I'll check the weather in Paris for you.",

"tool_calls": [

{

"id": "call_1",

"type": "function",

"function": {

"name": "get_weather",

"arguments": '{"location": "Paris, France", "unit": "celsius"}'

}

}

]

},

{

"role": "tool",

"tool_call_id": "call_1",

"content": '{"temperature": 22, "condition": "sunny", "humidity": 60}'

},

{

"role": "assistant",

"content": "The weather in Paris is currently sunny with a temperature of 22°C and 60% humidity. It's a beautiful day!"

}

]

### Apply chat template with tools

formatted_with_tools = tokenizer.apply_chat_template(

messages,

tools=tools,

tokenize=False,

add_generation_prompt=False

)

print("Chat template with tools:")

print(formatted_with_tools)

The output of the chat template with tools is:

Chat template with tools:

<|im_start|>system

#### Metadata

Knowledge Cutoff Date: June 2025

Today Date: 01 September 2025

Reasoning Mode: /think

#### Custom Instructions

You are a helpful assistant with access to tools.

##### Tools

You may call one or more functions to assist with the user query.

You are provided with function signatures within <tools></tools> XML tags:

<tools>

{'type': 'function', 'function': {'name': 'get_weather', 'description': 'Get the current weather for a location', 'parameters': {'type': 'object', 'properties': {'location': {'type': 'string', 'description': 'The city and state, e.g. San Francisco, CA'}, 'unit': {'type': 'string', 'enum': ['celsius', 'fahrenheit'], 'description': 'The temperature unit'}}, 'required': ['location']}}}

{'type': 'function', 'function': {'name': 'calculate', 'description': 'Perform mathematical calculations', 'parameters': {'type': 'object', 'properties': {'expression': {'type': 'string', 'description': 'Mathematical expression to evaluate'}}, 'required': ['expression']}}}

</tools>

For each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:

<tool_call>

{"name": <function-name>, "arguments": <args-json-object>}

...

{"temperature": 22, "condition": "sunny", "humidity": 60}<|im_end|>

<|im_start|>assistant

The weather in Paris is currently sunny with a temperature of 22°C and 60% humidity. It's a beautiful day!<|im_end|>

def format_tool_dataset(examples):
    """Format dataset with tool usage for training"""
    formatted_texts = []
    for messages, tools in zip(examples["messages"], examples.get("tools", [None] * len(examples["messages"]))):
        formatted_text = tokenizer.apply_chat_template(
            messages,
            tools=tools,
            tokenize=False,
            add_generation_prompt=False
        )
        formatted_texts.append(formatted_text)
    return {"text": formatted_texts}
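At inference time, the `<tool_call>` blocks described in the rendered system prompt have to be parsed and executed by your own code. The sketch below shows one possible way to do that. `run_tool_calls`, `TOOLS`, and the canned `get_weather` function are hypothetical, and the closing `</tool_call>` tag is assumed from the template's instructions.

```python
import json
import re
from typing import Any, Callable, Dict, List

TOOL_CALL_PATTERN = re.compile(r"<tool_call>\s*(\{.*?\})\s*</tool_call>", re.DOTALL)


def get_weather(location: str, unit: str = "celsius") -> Dict[str, Any]:
    """Hypothetical tool returning canned data instead of calling a weather API."""
    return {"location": location, "unit": unit, "temperature": 22, "condition": "sunny"}


# Registry mapping tool names to Python callables.
TOOLS: Dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def run_tool_calls(model_output: str) -> List[Dict[str, str]]:
    """Parse <tool_call> blocks and return one 'tool' message per call."""
    tool_messages = []
    for raw_call in TOOL_CALL_PATTERN.findall(model_output):
        call = json.loads(raw_call)
        result = TOOLS[call["name"]](**call["arguments"])
        tool_messages.append({"role": "tool", "content": json.dumps(result)})
    return tool_messages


output = '<tool_call>\n{"name": "get_weather", "arguments": {"location": "Paris, France"}}\n</tool_call>'
print(run_tool_calls(output))
```

The returned messages would be appended to the conversation before asking the model for its final answer. Real code should also handle unknown tool names and malformed JSON.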

Advanced Template Customization

For advanced use cases, you might need to customize or understand chat templates more deeply:

Inspecting a Model’s Chat Template

### View the actual template

print("SmolLM3 Chat Template:")

print(tokenizer.chat_template)

### See what special tokens are used

print("\nSpecial tokens:")

print(f"BOS: {tokenizer.bos_token}")

print(f"EOS: {tokenizer.eos_token}")

print(f"UNK: {tokenizer.unk_token}")

print(f"PAD: {tokenizer.pad_token}")

### Check for custom tokens

special_tokens = tokenizer.special_tokens_map

for name, token in special_tokens.items():
    print(f"{name}: {token}")

Custom Template Creation

"""

...

### Apply custom template (be very careful with this!)

### tokenizer.chat_template = custom_template

Template Debugging

def debug_chat_template(messages, tokenizer):
    """Debug chat template application"""
    # Apply template
    formatted = tokenizer.apply_chat_template(
        messages,
        tokenize=False,
        add_generation_prompt=True
    )

    # Tokenize to see the actual token IDs
    tokens = tokenizer(formatted, return_tensors="pt")
    token_ids = tokens['input_ids'][0].tolist()  # plain Python ints

    print("=== TEMPLATE DEBUG ===")
    print(f"Input messages: {len(messages)}")
    print(f"Formatted length: {len(formatted)} chars")
    print(f"Token count: {len(token_ids)}")

    print("\nFormatted text:")
    print(repr(formatted))  # Shows escape characters

    print("\nTokens:")
    print(token_ids[:20], "...")  # First 20 tokens

    print("\nDecoded tokens:")
    for i, token_id in enumerate(token_ids[:20]):
        token = tokenizer.decode([token_id])
        print(f"{i:2d}: {token_id:5d} -> {repr(token)}")

### Example usage

debug_messages = [

{"role": "user", "content": "Hello!"},

{"role": "assistant", "content": "Hi there!"}

]

debug_chat_template(debug_messages, tokenizer)

Key Takeaways

Understanding chat templates is crucial for effective instruction tuning. Here are the essential points to remember:

Core Concepts

- Template Consistency: Always use the same template format for training and inference - mismatches can significantly hurt performance

- Generation Prompts: Use add_generation_prompt=True for inference, False for training data preparation

- Role Structure: Clear role definitions (system, user, assistant, tool) help models understand conversation flow

- Context Management: Leverage SmolLM3’s extended context window efficiently by managing conversation history

- Special Token Handling: Let templates handle special tokens - avoid adding them manually

Advanced Features

- Dual-Mode Reasoning: Use <|thinking|> tags for complex problems requiring step-by-step reasoning

- Message Continuation: Use continue_final_message=True for structured output and prefilling responses

- Tool Integration: Modern templates support function calling and tool usage for enhanced capabilities

- Pipeline Automation: Text generation pipelines handle templates automatically for production use

- Multi-Dataset Training: Standardize different dataset formats before combining for training
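As an illustration of the multi-dataset point, the sketch below converts a few common record layouts into the `messages` format used throughout this post. The layouts and key names (`conversations` with `from`/`value`, `prompt`/`completion`) are assumptions about typical datasets, and `to_messages` is a hypothetical helper.

```python
from typing import Any, Dict, List

# Assumed role names for ShareGPT-style records ("from"/"value" keys).
SHAREGPT_ROLES = {"system": "system", "human": "user", "gpt": "assistant"}


def to_messages(example: Dict[str, Any]) -> List[Dict[str, str]]:
    """Convert one record from a few common layouts into a list of chat messages."""
    if "messages" in example:  # already in the target format
        return list(example["messages"])
    if "conversations" in example:  # ShareGPT-style
        return [
            {"role": SHAREGPT_ROLES[turn["from"]], "content": turn["value"]}
            for turn in example["conversations"]
        ]
    if "prompt" in example and "completion" in example:
        return [
            {"role": "user", "content": example["prompt"]},
            {"role": "assistant", "content": example["completion"]},
        ]
    raise ValueError(f"unrecognized record layout: {sorted(example)}")


records = [
    {"prompt": "What is 2 + 2?", "completion": "4"},
    {"conversations": [{"from": "human", "value": "Hi"}, {"from": "gpt", "value": "Hello!"}]},
]
for record in records:
    print(to_messages(record))
```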

Training Best Practices

- Dataset Preparation: Apply templates with add_generation_prompt=False and add_special_tokens=False for training

- Quality Control: Debug templates thoroughly to ensure proper formatting

- Performance Monitoring: Incorrect template usage can significantly impact model performance

- Multimodal Support: Templates extend to vision and audio models with appropriate modifications

Common Pitfalls to Avoid

- Template mismatch: Using a different template than the model was trained on.

- Double special tokens: Adding special tokens when the template already includes them.

- Missing system messages: Not providing enough context for consistent model behavior.

- Inconsistent formatting: Mixing different conversation formats in the same dataset.

- Wrong generation prompts: Using incorrect add_generation_prompt settings for your use case.

- Ignoring tool syntax: Not properly formatting tool calls and responses.

- Context overflow: Not managing long conversations within token limits.
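Some of these pitfalls, doubled special tokens in particular, can be caught with a simple string check on the rendered text. This is a rough sketch; `check_special_tokens` is a hypothetical helper and the heuristics are deliberately simple.

```python
def check_special_tokens(formatted: str, bos_token: str = "") -> list:
    """Return warnings about suspicious special-token usage in a rendered chat."""
    warnings = []
    starts = formatted.count("<|im_start|>")
    ends = formatted.count("<|im_end|>")
    # A trailing generation prompt or prefilled reply legitimately leaves one message open.
    if starts - ends not in (0, 1):
        warnings.append(f"unbalanced markers: {starts} starts vs {ends} ends")
    if "<|im_start|><|im_start|>" in formatted or "<|im_end|><|im_end|>" in formatted:
        warnings.append("doubled message marker")
    if bos_token and formatted.count(bos_token) > 1:
        warnings.append(f"BOS token {bos_token!r} appears more than once")
    return warnings


ok = "<|im_start|>user\nHi<|im_end|>\n<|im_start|>assistant\n"
print(check_special_tokens(ok))
print(check_special_tokens("<s><s>" + ok, bos_token="<s>"))
```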

Production Considerations

- Pipeline usage: Use automated pipelines for consistent template application in production.

- Error handling: Implement validation for message formats and role sequences.

- Performance optimization: Cache formatted templates when possible for repeated use.

- Monitoring: Track template application success rates and formatting consistency.

- Version control: Maintain template versions alongside model versions for reproducibility.
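The error-handling point above can be sketched as a small validator that runs before a conversation reaches the template. The function name `validate_messages`, the allowed role set, and the rule that user and assistant turns should not repeat are assumptions for illustration, not requirements of any particular library.

```python
from typing import Dict, List

ALLOWED_ROLES = {"system", "user", "assistant", "tool"}


def validate_messages(messages: List[Dict[str, str]]) -> List[str]:
    """Return a list of human-readable problems; an empty list means valid."""
    problems: List[str] = []
    if not messages:
        return ["conversation is empty"]

    for index, message in enumerate(messages):
        role = message.get("role")
        if role not in ALLOWED_ROLES:
            problems.append(f"message {index}: unknown role {role!r}")
        if not isinstance(message.get("content"), str):
            problems.append(f"message {index}: content must be a string")
        if role == "system" and index != 0:
            problems.append(f"message {index}: system message must come first")

    # Assumed convention: two consecutive user or assistant turns indicate a bug.
    for index in range(1, len(messages)):
        prev_role = messages[index - 1].get("role")
        role = messages[index].get("role")
        if role == prev_role and role in {"user", "assistant"}:
            problems.append(f"message {index}: repeated {role!r} turn")
    return problems


good = [
    {"role": "system", "content": "Be concise."},
    {"role": "user", "content": "Hi"},
    {"role": "assistant", "content": "Hello!"},
]
bad = [
    {"role": "user", "content": "Hi"},
    {"role": "user", "content": "Anyone there?"},
    {"role": "bot", "content": "Hello!"},
]
print(validate_messages(good))
print(validate_messages(bad))
```

Returning a list of problems rather than raising on the first one makes it easier to log formatting issues across a whole dataset.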

Beyond Basic Templates: Advanced Topics

This guide covered the fundamentals, but chat templates support many advanced features:

- Multimodal templates: Handling images, audio, and video in conversations.

- Document integration: Including external documents and knowledge bases.

- Custom template creation: Building specialized templates for domain-specific applications.

- Template optimization: Performance tuning for high-throughput applications.

For these advanced topics, refer to the specialized documentation linked below.

Next Steps

Now that you have a comprehensive understanding of chat templates, you’re ready to learn about supervised fine-tuning, where we’ll use these templates to train SmolLM3 on custom datasets.

Next: Supervised Fine-Tuning

Comprehensive Resources and Further Reading

Official Documentation

Model and Dataset Resources

Technical References

Supervised Fine-Tuning with SmolLM3

Supervised Fine-Tuning (SFT) is the cornerstone of instruction tuning - it’s how we transform a base language model into an instruction-following assistant. In this section, you’ll learn to fine-tune SmolLM3 using real-world datasets and production-ready tools.

What is Supervised Fine-Tuning?

SFT is the process of continuing to train a pre-trained model on task-specific datasets with labeled examples. Think of it as specialized education:

- Pre-training teaches the model general language understanding (like learning to read).

- Supervised fine-tuning teaches specific skills and behaviors (like learning to do a specific task).

The key insight behind SFT is that we’re not teaching the model new knowledge from scratch. Instead, we’re reshaping how existing knowledge is applied. The pre-trained model already understands language and grammar, and it has absorbed vast amounts of factual information. SFT focuses this general capability toward specific application patterns, response styles, and task-specific requirements.

This approach is effective because it leverages the rich representations learned during pre-training while requiring significantly less computational resources than training from scratch. The model learns to recognize instruction patterns, maintain conversation context, follow safety guidelines, and generate responses in desired formats.

[!TIP] Before starting SFT, consider whether using an existing instruction-tuned model with well-crafted prompts would suffice for your use case. SFT involves significant computational resources and engineering effort, so it should only be pursued when prompting existing models proves insufficient. Learn more about this decision process in the Hugging Face LLM Course.

The SmolLM3 SFT Journey

SmolLM3’s instruction-following capabilities come from a sophisticated SFT process:

- Base Model (SmolLM3-3B-Base): Trained on 11T tokens of general text

- SFT Training: Fine-tuned on curated instruction datasets including SmolTalk2

- Preference Alignment: Further refined using techniques like APO (Anchored Preference Optimization)

This multi-stage approach creates a model that’s both knowledgeable and helpful.

Why SFT Works: The Science Behind It

SFT is effective because it leverages the rich representations learned during pre-training while adapting the model’s behavior patterns. During SFT, the model’s parameters are fine-tuned through gradient descent on task-specific examples, causing subtle but important changes in how the model processes and generates text.

Specifically, the process works through several key mechanisms:

Behavioral Adaptation: The model learns to recognize instruction patterns and respond appropriately. This involves updating the attention mechanisms to focus on instruction cues in language and adjusting the output distribution to favor the desired responses. Research has shown that instruction tuning primarily affects the model’s surface-level behavior rather than its underlying knowledge (Wei et al., 2021).

Task Specialization: Rather than learning entirely new concepts, the model learns to apply its existing knowledge in specific contexts. This is why SFT is much more efficient than pre-training - we’re refining existing capabilities rather than building them from scratch. Studies indicate that most of the factual knowledge comes from pre-training, while SFT teaches the model how to format and present this knowledge appropriately (Ouyang et al., 2022).

Safety Alignment: Through exposure to carefully curated examples, the model learns to be more helpful, harmless, and honest. This involves both learning what to say and what not to say in various situations. The effectiveness of this approach has been demonstrated in works like InstructGPT (Ouyang et al., 2022) and Constitutional AI (Bai et al., 2022).

[!TIP] SFT doesn’t teach new facts - it teaches new behaviors. The model already knows about the world from pre-training; SFT teaches it how to be a helpful assistant using that knowledge.

The mathematical foundation involves minimizing the cross-entropy loss between the model’s predictions and the target responses in your training dataset. This process gradually shifts the model’s probability distributions to favor the types of responses demonstrated in your training examples.

When to Use Supervised Fine-Tuning

The key question is: “Does my use case require behavior that differs significantly from general-purpose conversation?” If yes, SFT is likely beneficial.

Decision framework: Use this checklist to determine if SFT is appropriate for your project:

- Have you tried prompt engineering with existing instruction-tuned models?

- Do you need consistent output formats that prompting cannot achieve?

- Is your domain specialized enough that general models struggle?

- Do you have high-quality training data (at least 1,000 examples)?

- Do you have the computational resources for training and evaluation?

If you answered “yes” to most of these, SFT is likely worth pursuing.

The SFT Process

Now let’s move on to the process of SFT itself. The SFT process follows a systematic approach that ensures high-quality results:

1. Dataset Preparation and Selection

The quality of your training data is the most critical factor for successful SFT. Unlike pre-training where quantity often matters most, SFT prioritizes quality and relevance. Your dataset should contain input-output pairs that demonstrate exactly the behavior you want your model to learn.

Choose the Right Dataset:

- SmolTalk2: The dataset used to train SmolLM3, containing high-quality instruction-response pairs.

- Domain-specific datasets: For specialized applications (medical, legal, technical).

- Custom datasets: Your own curated examples for specific use cases.

Each training example should consist of:

- Input prompt: The user’s instruction or question

- Expected response: The ideal assistant response

- Context (optional): Any additional information needed

[!TIP] Dataset size guidelines:

- Minimum: 1,000 high-quality examples for basic fine-tuning.

- Recommended: 10,000+ examples for robust performance.

- Quality over quantity: 1,000 well-curated examples often outperform 10,000 mediocre ones.

Remember: Your model will learn to mimic the patterns in your training data, so invest time in data curation.

2. Environment Setup and Configuration

To set up an environment for SFT, we need access to GPU compute. We have four main options:

- Local GPU: If you are lucky enough to have access to a GPU with at least 16GB of VRAM, you can train your model locally!

- Hugging Face Jobs: If you don’t have a GPU and don’t want to use a cloud provider, you can use Hugging Face Jobs! We’ll go into more detail about this in the next section.

- Notebook GPUs: If you like to use a notebook provider like Google Colab, you can use their GPUs!

- Cloud GPU: If you want to take control of your compute resources, you can use a cloud provider like AWS, GCP, or Azure.

In terms of hardware requirements, you will need a GPU with at least 16GB of VRAM, for example an Nvidia RTX 4080 or A10G.

3. Training Configuration

Choosing the right hyperparameters is crucial for successful SFT. The goal is to find the sweet spot where the model learns effectively without overfitting or becoming unstable. Here’s a detailed breakdown of each parameter and how to choose them:

Key Hyperparameters:

Learning Rate (5e-5 to 1e-4): Controls how much the model weights change with each update

- Start with 5e-5 for SmolLM3; this is conservative and stable.

- Too high: The model becomes unstable; loss oscillates or explodes.

- Too low: The model learns very slowly and may not converge in reasonable time.

Batch Size (4-16): Number of examples processed simultaneously

- Larger batches: More stable gradients, but require more GPU memory.

- Smaller batches: Less memory usage, but noisier gradients.

- Use gradient accumulation to achieve larger effective batch sizes.

Max Sequence Length (2048-4096): Maximum tokens per training example

- Longer sequences: Can handle more complex conversations.

- Shorter sequences: Faster training, less memory usage.

- Match your use case: Use the typical length of your target conversations.

Training Steps (1000-5000): Total number of parameter updates

- Depends on dataset size: More data usually requires more steps.

- Monitor validation loss: Stop when it stops improving.

- Rule of thumb: Three to five epochs through your dataset.

Warmup Steps (10% of total): Gradual learning rate increase at start

- Prevents early instability: Helps the model adapt gradually.

- Typical range: 100-500 steps for most SFT tasks.
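As a rough worked example of how training steps and warmup relate, the sketch below derives both from dataset size. The function names and the dataset numbers are assumptions chosen for illustration. The schedule shown is a plain linear warmup followed by a constant rate, with any decay phase omitted.

```python
import math


def plan_schedule(num_examples: int, effective_batch_size: int, epochs: int,
                  warmup_ratio: float = 0.1) -> dict:
    """Derive total optimizer steps and warmup steps from dataset size."""
    steps_per_epoch = math.ceil(num_examples / effective_batch_size)
    total_steps = steps_per_epoch * epochs
    warmup_steps = int(total_steps * warmup_ratio)
    return {
        "steps_per_epoch": steps_per_epoch,
        "total_steps": total_steps,
        "warmup_steps": warmup_steps,
    }


def lr_at_step(step: int, peak_lr: float, warmup_steps: int) -> float:
    """Linear warmup to peak_lr, then constant (decay omitted for brevity)."""
    if warmup_steps > 0 and step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    return peak_lr


# Assumed setup: 10,000 examples, effective batch size 16, three epochs.
plan = plan_schedule(num_examples=10_000, effective_batch_size=16, epochs=3)
print(plan)
print(lr_at_step(0, 5e-5, plan["warmup_steps"]))
```

With these assumed numbers the run lands at 1,875 optimizer steps with 187 warmup steps, inside the ranges quoted above.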

[!TIP] Hyperparameter starting points for SmolLM3:

To bootstrap your training, you can use the following hyperparameters:

Learning Rate:

### Conservative (stable, slower)

learning_rate = 5e-5

### Balanced (recommended)

learning_rate = 1e-4

### Aggressive (faster, less stable)

learning_rate = 2e-4

Batch Size:

We can lower the per-device batch size and compensate with gradient accumulation to keep the same effective batch size.

### Limited GPU Memory

per_device_train_batch_size = 2

gradient_accumulation_steps = 8

### Balanced GPU Memory

per_device_train_batch_size = 4

gradient_accumulation_steps = 4

### More GPU Memory

per_device_train_batch_size = 8

gradient_accumulation_steps = 2
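The three batch size settings above trade memory for accumulation steps while keeping the effective batch size the same. A quick check, assuming a single GPU (the helper name `effective_batch_size` is illustrative):

```python
def effective_batch_size(per_device: int, accumulation_steps: int, num_devices: int = 1) -> int:
    """Number of examples contributing to each optimizer step."""
    return per_device * accumulation_steps * num_devices


# (per_device_train_batch_size, gradient_accumulation_steps) from the settings above.
configs = {
    "limited": (2, 8),
    "balanced": (4, 4),
    "more": (8, 2),
}
sizes = {name: effective_batch_size(b, g) for name, (b, g) in configs.items()}
print(sizes)
```

All three configurations update the weights on 16 examples per optimizer step. Only the peak memory per forward and backward pass differs.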

Max Sequence Length:

### Very short sequences

max_length = 512

### Short sequences

max_length = 1024

### Long sequences

max_length = 2048

### Very long sequences

max_length = 4096

4. Monitoring and Evaluation

Effective monitoring is crucial for successful SFT. Unlike pre-training where you primarily watch loss decrease, SFT requires careful attention to both quantitative metrics and qualitative outputs. The goal is to ensure your model is learning the desired behaviors without overfitting or developing unwanted patterns.

Key Metrics to Monitor:

Training Loss: Should decrease steadily but not too rapidly

- Healthy pattern: Smooth, gradual decrease.

- Warning signs: Sudden spikes, oscillations, or plateaus.

- Typical range: Starts around 2-4, should decrease to 0.5-1.5.

Validation Loss: Most important metric for preventing overfitting

- Should track training loss: A small gap indicates good generalization.

- Growing gap: Sign of overfitting; the model may be memorizing training data.

- Use for early stopping: Stop training when validation loss stops improving.

Sample Outputs: Regular qualitative checks are essential

- Generate responses: Test the model on held-out prompts during training.

- Check format consistency: Ensure the model follows desired response patterns.

- Monitor for degradation: Watch for repetitive or nonsensical outputs.

Resource Usage: Track GPU memory and training speed

- Memory spikes: May indicate batch size is too large.

- Slow training: Could suggest inefficient data loading or processing.

Understanding Loss Patterns in SFT

Training loss typically follows three distinct phases, as illustrated in this example from the Hugging Face LLM Course:

- Initial Sharp Drop: Rapid adaptation to new data distribution

- Gradual Stabilization: Learning rate slows as model fine-tunes

- Convergence: Loss values stabilize, indicating training completion

Healthy Training Pattern: The key indicator of successful training is a small gap between training and validation loss, suggesting the model is learning generalizable patterns rather than memorizing specific examples.

Warning Signs to Watch For

Several patterns in the loss curves can indicate potential issues:

Overfitting Pattern

If validation loss increases while training loss continues to decrease, your model is overfitting. Consider:

- Reducing training steps or epochs

- Increasing dataset size or diversity

- Adding regularization techniques

- Using early stopping based on validation loss
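Early stopping on validation loss can be sketched in a few lines. This is a simplified, framework-free illustration with hypothetical loss values. When training with the Transformers `Trainer` family, the built-in `EarlyStoppingCallback` is likely the more practical route.

```python
from typing import List, Optional


def early_stopping_step(val_losses: List[float], patience: int = 2,
                        min_delta: float = 0.0) -> Optional[int]:
    """Return the evaluation index at which training would stop, or None."""
    best = float("inf")
    evals_without_improvement = 0
    for index, loss in enumerate(val_losses):
        if loss < best - min_delta:
            best = loss
            evals_without_improvement = 0
        else:
            evals_without_improvement += 1
            if evals_without_improvement >= patience:
                return index
    return None


# Hypothetical curve: validation loss improves, then starts rising (overfitting).
val_losses = [1.80, 1.42, 1.31, 1.33, 1.36, 1.40]
stop_at = early_stopping_step(val_losses, patience=2)
print(stop_at)
```

Here training stops at the second evaluation in a row that fails to beat the best loss of 1.31. The checkpoint to keep is the one from that best evaluation, not the last one.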

Underfitting Pattern

If loss doesn’t show significant improvement, the model might be:

- Learning too slowly (try increasing learning rate)

- Struggling with task complexity (check data quality)

- Hitting architectural limitations (consider different model size)

Potential Memorization

Extremely low loss values could suggest memorization rather than learning. This is concerning if:

- Model performs poorly on new, similar examples

- Outputs lack diversity or creativity

- Responses are too similar to training examples

[!TIP] Learn more about loss interpretation in the Hugging Face LLM Course.

Experiment Tracking with Trackio: For comprehensive experiment tracking, we recommend Trackio - a lightweight, free experiment tracking library built on Hugging Face infrastructure. Trackio provides:

- Drop-in replacement: API compatible with wandb.init, wandb.log, and wandb.finish.

- Local-first design: Dashboard runs locally by default, with optional Hugging Face Spaces hosting.

- Free hosting: Everything, including hosting on Hugging Face Spaces, is free.

- Lightweight: Fewer than 3,000 lines of Python code, easily extensible.

We can track any metrics during training, for example:

### Simple Trackio integration

import trackio

### Initialize tracking

trackio.init(project="smollm3-sft")

### Log metrics during training

trackio.log({"train_loss": 0.5, "learning_rate": 5e-5})

### Finish tracking

trackio.finish()

The most convenient way to track your training is to use trackio’s transformers integration. You can specify your Trackio project name and space ID using environment variables:

export TRACKIO_PROJECT_NAME="my-project"

export TRACKIO_SPACE_ID="username/space_id"

Or you can set them in your code:

import os

os.environ["TRACKIO_PROJECT_NAME"] = "my-project"

os.environ["TRACKIO_SPACE_ID"] = "username/space_id"

Then use the SFTTrainer class from TRL and let it handle the tracking for you.

from trl import SFTTrainer

trainer = SFTTrainer(

model=model,

train_dataset=dataset["train"],

args=config,

)

Trackio will serve an application with the metrics from training that looks like this:

Logged metrics

While training and evaluating, we record the following metrics:

- global_step: The total number of optimizer steps taken so far.

- epoch: The current epoch number, based on dataset iteration.

- num_tokens: The total number of tokens processed so far.

- loss: The average cross-entropy loss computed over non-masked tokens in the current logging interval.

- entropy: The average entropy of the model’s predicted token distribution over non-masked tokens.

- mean_token_accuracy: The proportion of non-masked tokens for which the model’s top-1 prediction matches the ground truth token.

- learning_rate: The current learning rate, which may change dynamically if a scheduler is used.

- grad_norm: The L2 norm of the gradients, computed before gradient clipping.
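To show what the entropy and mean_token_accuracy metrics measure, here is a toy calculation in plain Python. The three-token vocabulary, the probabilities, and the helper names are made up for illustration. This is not how TRL computes the metrics internally.

```python
import math
from typing import List


def token_entropy(probs: List[float]) -> float:
    """Shannon entropy (in nats) of one predicted token distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def argmax(values: List[float]) -> int:
    """Index of the largest value (first one wins on ties)."""
    return max(range(len(values)), key=values.__getitem__)


def masked_metrics(distributions: List[List[float]], targets: List[int],
                   mask: List[int]) -> dict:
    """Average entropy and top-1 accuracy over positions where mask == 1."""
    kept = [i for i, m in enumerate(mask) if m == 1]
    correct = sum(1 for i in kept if argmax(distributions[i]) == targets[i])
    entropy = sum(token_entropy(distributions[i]) for i in kept) / len(kept)
    return {"entropy": entropy, "mean_token_accuracy": correct / len(kept)}


# Toy vocabulary of 3 tokens, 4 positions; the last position is masked out.
distributions = [
    [0.7, 0.2, 0.1],
    [0.1, 0.8, 0.1],
    [0.5, 0.25, 0.25],
    [1 / 3, 1 / 3, 1 / 3],
]
targets = [0, 1, 2, 0]
mask = [1, 1, 1, 0]
metrics = masked_metrics(distributions, targets, mask)
print(metrics)
```

Two of the three unmasked positions have the correct top-1 prediction, so accuracy is 2/3. Entropy stays below log(3), the value for a uniform distribution over three tokens.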

SFT supports both language modeling and prompt-completion datasets. The SFTTrainer is compatible with both standard and conversational dataset formats. When provided with a conversational dataset, the trainer will automatically apply the chat template to the dataset.

### Standard language modeling

{"text": "The sky is blue."}

### Conversational language modeling

{"messages": [{"role": "user", "content": "What color is the sky?"},

{"role": "assistant", "content": "It is blue."}]}

### Standard prompt-completion

{"prompt": "The sky is",

"completion": " blue."}

### Conversational prompt-completion

{"prompt": [{"role": "user", "content": "What color is the sky?"}],

"completion": [{"role": "assistant", "content": "It is blue."}]}

If your dataset is not in one of these formats, you can preprocess it to convert it into the expected format. Here is an example with the FreedomIntelligence/medical-o1-reasoning-SFT dataset:

from datasets import load_dataset

dataset = load_dataset("FreedomIntelligence/medical-o1-reasoning-SFT", "en")

def preprocess_function(example):

    return {

        "prompt": [{"role": "user", "content": example["Question"]}],

        "completion": [

            {"role": "assistant", "content": f"<think>{example['Complex_CoT']}</think>{example['Response']}"}

        ],

    }

dataset = dataset.map(preprocess_function, remove_columns=["Question", "Response", "Complex_CoT"])

print(next(iter(dataset["train"])))

{

"prompt": [

{

"content": "Given the symptoms of sudden weakness in the left arm and leg, recent long-distance travel, and the presence of swollen and tender right lower leg, what specific cardiac abnormality is most likely to be found upon further evaluation that could explain these findings?",

"role": "user",

}

],

"completion": [

{

"content": "<think>Okay, let's see what's going on here. We've got sudden weakness [...] clicks into place!</think>The specific cardiac abnormality most likely to be found in [...] the presence of a PFO facilitating a paradoxical embolism.",

"role": "assistant",

}

],

}
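Before writing a preprocessing function, it can help to check which of the four dataset formats shown earlier an example already matches. The sketch below infers the format from the keys of a single example. The function name `detect_format` and the returned labels are illustrative assumptions, not part of TRL.

```python
from typing import Any, Dict


def detect_format(example: Dict[str, Any]) -> str:
    """Classify an example into one of the four dataset formats by its keys."""
    if "text" in example:
        return "standard language modeling"
    if "messages" in example:
        return "conversational language modeling"
    if "prompt" in example and "completion" in example:
        # A list of role/content dicts marks the conversational variant.
        if isinstance(example["prompt"], list):
            return "conversational prompt-completion"
        return "standard prompt-completion"
    raise ValueError(f"Unsupported keys: {sorted(example)}")


examples = [
    {"text": "The sky is blue."},
    {"messages": [{"role": "user", "content": "What color is the sky?"},
                  {"role": "assistant", "content": "It is blue."}]},
    {"prompt": "The sky is", "completion": " blue."},
    {"prompt": [{"role": "user", "content": "What color is the sky?"}],
     "completion": [{"role": "assistant", "content": "It is blue."}]},
]
detected = [detect_format(e) for e in examples]
print(detected)
```

An example that raises `ValueError` here, such as the raw medical dataset with its Question and Response columns, is one that needs a preprocessing step like the one above.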

Chat Templates in Training

We’ll return briefly to chat templates in the context of training. Using chat templates correctly during training is crucial for model performance. Here are the key considerations and best practices:

Preprocessing and tokenization

During training, each example is expected to contain a text field or a (prompt, completion) pair, depending on the dataset format. For more details on the expected formats, see Dataset formats. The SFTTrainer tokenizes each input using the model’s tokenizer. If both prompt and completion are provided separately, they are concatenated before tokenization.

Computing the loss

The loss used in SFT is the token-level cross-entropy loss, defined as:

\[\mathcal{L}_{\text{SFT}}(\theta) = - \sum_{t=1}^{T} \log p_\theta(y_t \mid y_{<t}),\]

where \( y_t \) is the target token at timestep \( t \), and the model is trained to predict the next token given the previous ones. In practice, padding tokens are masked out during loss computation.
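A small numeric example may make the formula easier to read. The probabilities below are toy values standing in for what a model assigns to each correct next token. The mask marks the final position as padding, so it does not contribute to the loss.

```python
import math
from typing import List


def sft_loss(token_probs: List[float], loss_mask: List[int]) -> float:
    """Sum of -log p(y_t | y_<t) over positions where loss_mask == 1."""
    return -sum(math.log(p) for p, m in zip(token_probs, loss_mask) if m == 1)


# Probability the model assigned to each correct next token (toy numbers).
token_probs = [0.5, 0.25, 0.8, 0.01]
# The final position is padding, so it is excluded from the loss.
loss_mask = [1, 1, 1, 0]

total = sft_loss(token_probs, loss_mask)
mean = total / sum(loss_mask)
print(f"summed loss: {total:.4f}, mean per token: {mean:.4f}")
```

The summed loss equals -log(0.5 × 0.25 × 0.8) = log(10), which matches the formula term by term. Logged training loss is usually the per-token mean rather than the sum, consistent with the loss metric described earlier.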

TRL is the go-to toolkit for training language models, built specifically for instruction tuning and alignment. It’s what we’ll use throughout this course.

Why TRL?

- Production ready: Used by major organizations and research labs.

- Comprehensive: Supports SFT, DPO, ORPO, PPO, and more advanced techniques.

- Efficient: Optimized for memory usage and training speed.

- Flexible: Works with any Hugging Face model.

- CLI support: Command-line tools for scalable training workflows.

Key Components

- SFTTrainer: The core class for supervised fine-tuning

- SFTConfig: Configuration management for training parameters

- CLI Tools: Command-line interface for production workflows

- Integration: Seamless integration with Hugging Face Hub, Trackio, Weights & Biases, and more

TRL’s Architecture

TRL is built on top of the Hugging Face ecosystem:

- Transformers: Model loading and inference.

- Datasets: Data processing and management.

- Accelerate: Distributed training and optimization.

- PEFT: Parameter-efficient fine-tuning (LoRA, QLoRA).

This integrated approach means you get all the benefits of the Hugging Face ecosystem while using state-of-the-art training techniques.

[!TIP] TRL versus other training libraries:

- TRL: Specialized for LLM training, built for instruction tuning.

- Transformers Trainer: General purpose, suitable for basic fine-tuning.

- DeepSpeed: Focuses on large-scale distributed training.

- Accelerate: Provides low-level distributed training primitives.

TRL provides the best balance of ease-of-use and advanced features for SFT. For more details on training approaches, see the Hugging Face LLM Course.

Hands-On: Your First SmolLM3 Fine-Tune

Ready to put theory into practice? Here’s a preview of what you’ll build in the exercises. You can use either the Python or the CLI approach:

```python
from transformers import AutoModelForCausalLM, AutoTokenizer
from trl import SFTTrainer, SFTConfig
from datasets import load_dataset
import trackio as wandb

# Initialize experiment tracking
wandb.init(project="smollm3-sft", name="my-first-sft-run")

# Load SmolLM3 base model
model = AutoModelForCausalLM.from_pretrained("HuggingFaceTB/SmolLM3-3B-Base")
tokenizer = AutoTokenizer.from_pretrained("HuggingFaceTB/SmolLM3-3B-Base")

# Load SmolTalk2 dataset
dataset = load_dataset("HuggingFaceTB/smoltalk2_everyday_convs_think")

# Configure training with Trackio integration
config = SFTConfig(
    output_dir="./smollm3-finetuned",
    per_device_train_batch_size=4,
    learning_rate=5e-5,
    max_steps=1000,
    report_to="trackio",  # Enable Trackio logging
)

# Train!
trainer = SFTTrainer(
    model=model,
    train_dataset=dataset["train"],
    args=config,
)
trainer.train()
```

```bash
# Fine-tune SmolLM3 using TRL CLI with Trackio tracking
trl sft \
    --model_name_or_path HuggingFaceTB/SmolLM3-3B-Base \
    --dataset_name HuggingFaceTB/smoltalk2_everyday_convs_think \
    --output_dir ./smollm3-sft-model \
    --per_device_train_batch_size 4 \
    --learning_rate 5e-5 \
    --max_steps 1000 \
    --logging_steps 50 \
    --save_steps 200 \
    --report_to trackio \
    --push_to_hub \
    --hub_model_id your-username/smollm3-custom
```

Serverless Training Options

While you can train models locally, cloud infrastructure offers significant advantages for SFT training. For users who want to skip the complexity of GPU setup and environment management, Hugging Face Jobs provides a seamless solution.

See Training with Hugging Face Jobs for fully managed cloud infrastructure with high-end GPUs, automatic scaling, and integrated monitoring.

Key Takeaways

- SFT is Essential: It’s the bridge between base models and instruction-following assistants

- Data Quality Matters: High-quality datasets lead to better fine-tuned models - invest time in curation

- Monitor Carefully: Watch both loss curves and actual outputs to catch issues early

- TRL Simplifies Everything: From research to production, TRL provides the tools you need

- SmolLM3 is Perfect for Learning: Powerful enough to be useful, small enough to be accessible

- Multiple Approaches: Both programmatic and CLI workflows for different use cases

[!TIP] 🎓 Continue Learning: This introduction covers the fundamentals, but SFT is a deep topic. For more advanced techniques, evaluation methods, and troubleshooting tips, explore the Hugging Face LLM Course which provides comprehensive coverage of modern LLM training techniques.

Next Steps

Now that you understand the theory, choose your training approach:

- Training with Hugging Face Jobs - Use cloud infrastructure for training

- Hands-On Exercises - Fine-tune your own SmolLM3 model locally or in the cloud

Resources and Further Reading

LoRA and PEFT: Efficient Fine-Tuning

Parameter-Efficient Fine-Tuning (PEFT) lets you adapt large models by training a small number of additional parameters while keeping the base model frozen. The most widely used PEFT method is LoRA (Low-Rank Adaptation), which injects trainable low-rank updates into linear layers. This often reduces trainable parameters by ~90% while preserving performance.

When to use PEFT

- You have limited compute or memory budget

- You need to quickly adapt a base model to multiple tasks/domains

- You want fast iteration and small artifacts (adapter weights are usually a few MB)

Understanding LoRA

LoRA has become the most widely adopted PEFT method. It works by adding small rank decomposition matrices to the attention weights, typically reducing trainable parameters by about 90%.

LoRA (Low-Rank Adaptation) is a parameter-efficient fine-tuning technique that freezes the pre-trained model weights and injects trainable rank decomposition matrices into the model’s layers. Instead of training all model parameters during fine-tuning, LoRA decomposes the weight updates into smaller matrices through low-rank decomposition, significantly reducing the number of trainable parameters while maintaining model performance. For example, when applied to GPT-3 175B, LoRA reduced trainable parameters by 10,000x and GPU memory requirements by 3x compared to full fine-tuning. You can read more about LoRA in the LoRA paper.

LoRA works by adding pairs of rank decomposition matrices to transformer layers, typically focusing on attention weights. During inference, these adapter weights can be merged with the base model, resulting in no additional latency overhead. LoRA is particularly useful for adapting large language models to specific tasks or domains while keeping resource requirements manageable.
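The parameter savings and the low-rank forward pass can be illustrated with plain Python lists. The 2048 × 2048 layer size and the rank of 8 are assumptions picked for the arithmetic. The scaling factor `lora_alpha / r` follows the usual LoRA convention.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product, enough for a toy example."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_param_counts(d_out: int, d_in: int, rank: int) -> dict:
    """Trainable parameters for a full update versus a rank-r LoRA update."""
    full = d_out * d_in
    lora = rank * (d_out + d_in)
    return {"full": full, "lora": lora, "reduction": full / lora}


# Assumed layer shape for illustration: one 2048 x 2048 projection, rank 8.
counts = lora_param_counts(d_out=2048, d_in=2048, rank=8)

# Toy 2x2 layer with a rank-1 update: delta_W = scale * B @ A.
W: Matrix = [[1.0, 0.0], [0.0, 1.0]]  # frozen base weight
B: Matrix = [[1.0], [2.0]]            # trainable, shape (d_out, r)
A: Matrix = [[0.5, -0.5]]             # trainable, shape (r, d_in)
scale = 2.0                           # lora_alpha / r, here 2 / 1
x: Matrix = [[3.0], [1.0]]            # column-vector input

base_out = matmul(W, x)
lora_out = matmul(B, matmul(A, x))
y = [[b[0] + scale * l[0]] for b, l in zip(base_out, lora_out)]
print(counts)
print(y)
```

For this single layer the adapter trains 32,768 values instead of about 4.2 million, a 128x reduction. The overall figure for a model depends on which layers are adapted and on the chosen rank.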

Loading LoRA Adapters

Adapters can be loaded onto a pretrained model with load_adapter(), which is useful for trying out different adapters whose weights aren’t merged. Set the active adapter weights with the set_adapter() function. To return to the base model, you can use unload() to unload all of the LoRA modules. This makes it easy to switch between different task-specific weights.

from transformers import AutoModelForCausalLM

from peft import PeftModel

base_model = AutoModelForCausalLM.from_pretrained("<base_model_name>")

peft_model_id = "<peft_adapter_id>"

model = PeftModel.from_pretrained(base_model, peft_model_id)

Merging LoRA Adapters

After training with LoRA, you might want to merge the adapter weights back into the base model for easier deployment. This creates a single model with the combined weights, eliminating the need to load adapters separately during inference.

The merging process requires attention to memory management and precision. Since you’ll need to load both the base model and adapter weights simultaneously, ensure sufficient GPU/CPU memory is available. Using device_map="auto" in transformers will help with automatic memory management. Maintain consistent precision (e.g., float16) throughout the process, matching the precision used during training and saving the merged model in the same format for deployment. Before deploying, always validate the merged model by comparing its outputs and performance metrics with the adapter-based version.
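The validation step described above, comparing merged and adapter-based outputs, can be sketched on a toy layer. The helper names `merge_lora` and `max_abs_diff` are illustrative. The real operation in PEFT is `merge_and_unload()`, shown later in this section.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product for small toy matrices."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merge_lora(W: Matrix, B: Matrix, A: Matrix, scale: float) -> Matrix:
    """Fold the low-rank update into the base weight: W + scale * (B @ A)."""
    delta = matmul(B, A)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(W, delta)]


def max_abs_diff(a: Matrix, b: Matrix) -> float:
    """Largest element-wise difference between two equally shaped matrices."""
    return max(abs(x - y) for ra, rb in zip(a, b) for x, y in zip(ra, rb))


W = [[1.0, 2.0], [3.0, 4.0]]  # frozen base weight
B = [[0.5], [-1.0]]           # adapter matrix, shape (d_out, r)
A = [[2.0, 0.0]]              # adapter matrix, shape (r, d_in)
scale = 2.0
x = [[1.0], [1.0]]

# Adapter path: base output plus the scaled low-rank correction.
adapter_out = [[b[0] + scale * l[0]]
               for b, l in zip(matmul(W, x), matmul(B, matmul(A, x)))]
# Merged path: a single matrix multiply with the folded weight.
merged_W = merge_lora(W, B, A, scale)
merged_out = matmul(merged_W, x)
print(max_abs_diff(adapter_out, merged_out))
```

On real models the two paths will not match bit for bit in float16 or bfloat16, so a small tolerance is a more realistic check than exact equality.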

Adapters are also convenient for switching between different tasks or domains. You can load the base model and adapter weights separately, which allows quick switching between task-specific weights.

[!TIP] When implementing PEFT methods, start with small rank values (4-8) for LoRA and monitor training loss. Use validation sets to prevent overfitting and compare results with full fine-tuning baselines when possible. The effectiveness of different methods can vary by task, so experimentation is key.

OLoRA

OLoRA utilizes QR decomposition to initialize the LoRA adapters. OLoRA translates the base weights of the model by a factor of their QR decompositions, i.e., it mutates the weights before performing any training on them. This approach significantly improves stability, accelerates convergence speed, and ultimately achieves superior performance.

Using TRL with PEFT

PEFT methods can be combined with TRL (Transformer Reinforcement Learning) for efficient fine-tuning. This integration is particularly useful for RLHF (Reinforcement Learning from Human Feedback) as it reduces memory requirements.

from peft import LoraConfig

from trl import SFTTrainer

### Load model with PEFT config

lora_config = LoraConfig(

r=16,

lora_alpha=32,

lora_dropout=0.05,

bias="none",

task_type="CAUSAL_LM"

)

trainer = SFTTrainer(

model="your-model-name",

train_dataset=dataset["train"],

peft_config=lora_config

)

Basic Merging Implementation

After training a LoRA adapter, you can merge the adapter weights back into the base model. Here’s how to do it:

import torch

from transformers import AutoModelForCausalLM

from peft import PeftModel

### 1. Load the base model

base_model = AutoModelForCausalLM.from_pretrained(

"base_model_name",

dtype=torch.bfloat16,

device_map="auto"

)

### 2. Load the PEFT model with adapter

peft_model = PeftModel.from_pretrained(

base_model,

"path/to/adapter",

dtype=torch.bfloat16

)

### 3. Merge adapter weights with base model

try:

    merged_model = peft_model.merge_and_unload()

except RuntimeError as e:

    print(f"Merging failed: {e}")

    ### Implement fallback strategy or memory optimization here;

    ### re-raise so the save step below never runs without a merged model

    raise

### 4. Save the merged model

merged_model.save_pretrained("path/to/save/merged_model")

If you encounter size discrepancies in the saved model, ensure you’re also saving the tokenizer:

### Save both model and tokenizer

from transformers import AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("base_model_name")

merged_model.save_pretrained("path/to/save/merged_model")

tokenizer.save_pretrained("path/to/save/merged_model")

Quick start with TRL + LoRA

The TRL SFTTrainer integrates natively with PEFT. Define a LoraConfig, pass it to the trainer, and train only the adapter weights.

from peft import LoraConfig

from trl import SFTTrainer, SFTConfig

### 1) Configure LoRA

peft_config = LoraConfig(

r=8,

lora_alpha=16,

lora_dropout=0.05,

bias="none",

task_type="CAUSAL_LM",

)

### 2) Create trainer (example)

trainer = SFTTrainer(

model=model,

args=SFTConfig(output_dir="lora-adapter", num_train_epochs=1, per_device_train_batch_size=2, packing=True),

train_dataset=dataset["train"],

peft_config=peft_config,

)

trainer.train()

After training, you can either:

- Load adapters at inference time alongside the base model, or

- Merge adapters into the base model for simplified deployment.

Resources

Hands-On Exercises: Fine-Tuning SmolLM3

Notebook (Google Colab): https://colab.research.google.com/github/huggingface/smol-course/blob/main/notebooks/1/4.ipynb

Welcome to the practical section! Here you’ll apply everything you’ve learned about chat templates and supervised fine-tuning using SmolLM3. These exercises progress from basic concepts to advanced techniques, giving you real-world experience with instruction tuning.

Learning Objectives

By completing these exercises, you will:

- Master SmolLM3’s chat template system

- Fine-tune SmolLM3 on real datasets using both Python APIs and CLI tools

- Work with the SmolTalk2 dataset that was used to train the original model

- Compare base model vs fine-tuned model performance

- Deploy your models to Hugging Face Hub

- Understand production workflows for scaling fine-tuning

Exercise 1: Exploring SmolLM3’s Chat Templates

Objective: Understand how SmolLM3 handles different conversation formats and reasoning modes.

SmolLM3 is a hybrid reasoning model that can either follow instructions directly or generate tokens that ‘reason’ through a complex problem. When post-trained effectively, the model will reason on hard problems and generate direct responses on easy problems.

Environment Setup

[!WARNING]

- You need a GPU with at least 8GB VRAM for training. CPU/MPS can run formatting and dataset exploration, but training larger models will likely fail.

- First run will download several GB of model weights; ensure 15GB+ free disk and a stable connection.

- If you need access to private repos, authenticate with Hugging Face Hub via login().
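As a rough sanity check on the memory and download figures above, weight size can be estimated as parameter count times bytes per parameter. The sketch below assumes a round 3 billion parameters for the 3B model used later in this exercise. The helper name `weight_memory_gb` is hypothetical. The estimate covers weights only, so treat it as a lower bound: training also needs memory for gradients, optimizer state, and activations.

```python
# Back-of-the-envelope estimate of model weight memory (weights only).

def weight_memory_gb(num_params: float, bytes_per_param: int) -> float:
    """Return the approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9

params = 3e9  # assumption: "3B" taken as roughly 3 billion parameters

bf16_gb = weight_memory_gb(params, 2)  # bfloat16 stores 2 bytes per parameter
fp32_gb = weight_memory_gb(params, 4)  # float32 stores 4 bytes per parameter

print(f"bf16 weights: ~{bf16_gb:.1f} GB")
print(f"fp32 weights: ~{fp32_gb:.1f} GB")
```

Halving the precision halves the weight footprint, which is why the loading code in this exercise uses bfloat16.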

Let’s start by setting up our environment.

### Install required packages (run in Colab or your environment)

pip install "transformers>=4.36.0" "trl>=0.7.0" "datasets>=2.14.0" "torch>=2.0.0"

pip install "accelerate>=0.24.0" "peft>=0.7.0" "trackio"

Then, let’s import the necessary libraries and set up the accelerator device. Below, we check whether we’re using an NVIDIA GPU, an Apple Metal (MPS) accelerator, or the CPU. In practice, training these models on a CPU is not feasible, so we’ll use an accelerator.

### Import necessary libraries

import torch

from transformers import AutoModelForCausalLM, AutoTokenizer, pipeline

from datasets import load_dataset

import json

from typing import Optional, Dict, Any

if torch.cuda.is_available():

    device = "cuda"

    print(f"Using CUDA GPU: {torch.cuda.get_device_name()}")

    print(f"GPU memory: {torch.cuda.get_device_properties(0).total_memory / 1e9:.1f}GB")

elif hasattr(torch.backends, 'mps') and torch.backends.mps.is_available():

    device = "mps"

    print("Using Apple MPS")

else:

    device = "cpu"

    print("Using CPU - you will need to use a GPU to train models")

### Authenticate with Hugging Face (optional, for private models)

from huggingface_hub import login

### login() ### Uncomment if you need to access private models

Take note of the device you’re using and your available GPU memory. If it is below 8GB, you will not be able to complete some of the exercises.

Output

```python output
Using CUDA GPU: NVIDIA A100-SXM4-40GB
GPU memory: 42.5GB
```

Load SmolLM3 Models

Now let’s load the base and instruct models for comparison.

### Load both base and instruct models for comparison

base_model_name = "HuggingFaceTB/SmolLM3-3B-Base"

instruct_model_name = "HuggingFaceTB/SmolLM3-3B"

### Load tokenizers

base_tokenizer = AutoTokenizer.from_pretrained(base_model_name)

instruct_tokenizer = AutoTokenizer.from_pretrained(instruct_model_name)

### Load models (use smaller precision for memory efficiency)

base_model = AutoModelForCausalLM.from_pretrained(

base_model_name,

dtype=torch.bfloat16,

device_map="auto"

)

instruct_model = AutoModelForCausalLM.from_pretrained(

instruct_model_name,

dtype=torch.bfloat16,

device_map="auto"

)

print("Models loaded successfully!")

This will download the models and tokenizers to your local machine from the Hugging Face Hub. This includes the model’s parameter weights, tokenizer, and other model configuration defined by the model authors.

Output

You should see green bars loading the model weights. This may take a few minutes.

```python output
tokenizer_config.json: 50.4k/? [00:00<00:00, 5.09MB/s]
tokenizer.json: 100% 17.2M/17.2M [00:02<00:00, 10.7MB/s]
special_tokens_map.json: 100% 151/151 [00:00<00:00, 21.5kB/s]
tokenizer_config.json: 50.4k/? [00:00<00:00, 5.45MB/s]
tokenizer.json: 100% 17.2M/17.2M [00:00<00:00, 472kB/s]
special_tokens_map.json: 100% 289/289 [00:00<00:00, 35.0kB/s]
chat_template.jinja: 5.60k/? [00:00<00:00, 577kB/s]
config.json: 100% 943/943 [00:00<00:00, 121kB/s]
model.safetensors.index.json: 26.9k/? [00:00<00:00, 2.81MB/s]
Fetching 2 files: 100% 2/2 [00:32<00:00, 32.11s/it]
model-00001-of-00002.safetensors: 100% 4.97G/4.97G [00:31<00:00, 247MB/s]
model-00002-of-00002.safetensors: 100% 1.18G/1.18G [00:17<00:00, 57.2MB/s]
Loading checkpoint shards: 100% 2/2 [00:01<00:00, 1.18it/s]
generation_config.json: 100% 126/126 [00:00<00:00, 17.1kB/s]
config.json: 1.92k/? [00:00<00:00, 229kB/s]
model.safetensors.index.json: 26.9k/? [00:00<00:00, 3.14MB/s]
Fetching 2 files: 100% 2/2 [00:32<00:00, 32.38s/it]
model-00002-of-00002.safetensors: 100% 1.18G/1.18G [00:17<00:00, 92.1MB/s]
model-00001-of-00002.safetensors: 100% 4.97G/4.97G [00:31<00:00, 182MB/s]
Loading checkpoint shards: 100% 2/2 [00:01<00:00, 1.14it/s]
generation_config.json: 100% 182/182 [00:00<00:00, 21.0kB/s]
Models loaded successfully!
```

Now let’s explore the chat template formatting. We will create different types of conversations to test.

### Create different types of conversations to test

conversations = {

"simple_qa": [

{"role": "user", "content": "What is machine learning?"},

],

"with_system": [

{"role": "system", "content": "You are a helpful AI assistant specialized in explaining technical concepts clearly."},

{"role": "user", "content": "What is machine learning?"},

],

"multi_turn": [

{"role": "system", "content": "You are a math tutor."},

{"role": "user", "content": "What is calculus?"},

{"role": "assistant", "content": "Calculus is a branch of mathematics that deals with rates of change and accumulation of quantities."},

{"role": "user", "content": "Can you give me a simple example?"},

],

"reasoning_task": [

{"role": "user", "content": "Solve step by step: If a train travels 120 miles in 2 hours, what is its average speed?"},

]

}

for conv_type, messages in conversations.items():

    print(f"--- {conv_type.upper()} ---")

    ### Format without generation prompt (for completed conversations)

    formatted_complete = instruct_tokenizer.apply_chat_template(

        messages,

        tokenize=False,

        add_generation_prompt=False

    )

    ### Format with generation prompt (for inference)

    formatted_prompt = instruct_tokenizer.apply_chat_template(

        messages,

        tokenize=False,

        add_generation_prompt=True

    )

    print("Complete conversation format:")

    print(formatted_complete)

    print("\nWith generation prompt:")

    print(formatted_prompt)

    print("\n" + "="*50 + "\n")

Output