Hugging Face LLM Course

Published:

This blog post summarizes the Hugging Face LLM Course.


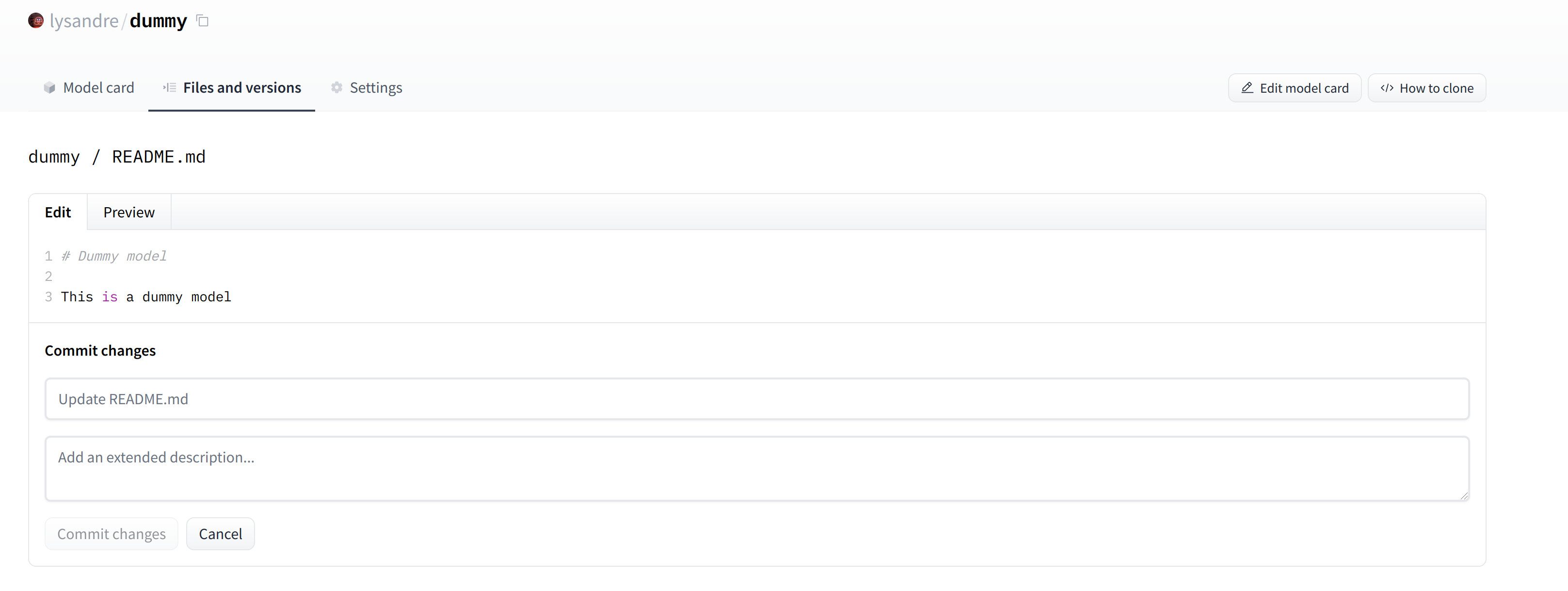

- Hugging Face LLM Course

- Chapter 0. Setup

- Chapter 1. Transformer models

- 1. Introduction

- 2. Natural Language Processing and Large Language Models

- 3. Transformers, what can they do?

- Transformers are everywhere!

- Working with pipelines

- Available pipelines for different modalities

- Zero-shot classification

- Text generation

- Using any model from the Hub in a pipeline

- Mask filling

- Named entity recognition

- Question answering

- Summarization

- Translation

- Image and audio pipelines

- Combining data from multiple sources

- Conclusion

- 4. How do Transformers work?

- 5. How 🤗 Transformers solve tasks

- 6. Transformer Architectures

- 8. Deep dive into Text Generation Inference with LLMs

- 9. Bias and limitations

- 10. Summary

- Chapter 2. Using 🤗 Transformers

- Chapter 3. Fine-tuning a pretrained model

- Chapter 4. Sharing models and tokenizers

- Chapter 5. The 🤗 Datasets library

- Chapter 6. The 🤗 Tokenizers library

- 1. Introduction

- 2. Training a new tokenizer from an old one

- 3a. Fast tokenizers’ special powers

- 3b. Fast tokenizers in the QA pipeline

- 4. Normalization and pre-tokenization

- 5. Byte-Pair Encoding tokenization

- 6. WordPiece tokenization

- 7. Unigram tokenization

- 8. Building a tokenizer, block by block

- 9. Tokenizers, check!

- Chapter 7. Classical NLP tasks

- Chapter 8. How to ask for help

- Chapter 9. Building and sharing demos

- Chapter 10. Curate high-quality datasets

- Chapter 11. Fine-tune Large Language Models

- Chapter 12. Build Reasoning Models

Course link: Hugging Face - LLM Course

Chapter 0. Setup

1. Introduction

Welcome to the Hugging Face course! This introduction will guide you through setting up a working environment. If you’re just starting the course, we recommend you first take a look at Chapter 1, then come back and set up your environment so you can try the code yourself.

All the libraries that we’ll be using in this course are available as Python packages, so here we’ll show you how to set up a Python environment and install the specific libraries you’ll need.

We’ll cover two ways of setting up your working environment, using a Colab notebook or a Python virtual environment. Feel free to choose the one that resonates with you the most. For beginners, we strongly recommend that you get started by using a Colab notebook.

Note that we will not be covering the Windows system. If you’re running on Windows, we recommend following along using a Colab notebook. If you’re using a Linux distribution or macOS, you can use either approach described here.

Most of the course relies on you having a Hugging Face account. We recommend creating one now: create an account.

Using a Google Colab notebook

Using a Colab notebook is the simplest possible setup; boot up a notebook in your browser and get straight to coding!

If you’re not familiar with Colab, we recommend you start by following the introduction. Colab allows you to use some accelerating hardware, like GPUs or TPUs, and it is free for smaller workloads.

Once you’re comfortable moving around in Colab, create a new notebook and get started with the setup.

The next step is to install the libraries that we’ll be using in this course. We’ll use pip for the installation, which is the package manager for Python. In notebooks, you can run system commands by preceding them with the ! character, so you can install the 🤗 Transformers library as follows:

!pip install transformers

You can make sure the package was correctly installed by importing it within your Python runtime:

import transformers

This installs a very light version of 🤗 Transformers. In particular, no specific machine learning frameworks (like PyTorch or TensorFlow) are installed. Since we’ll be using a lot of different features of the library, we recommend installing the development version, which comes with all the required dependencies for pretty much any imaginable use case:

!pip install transformers[sentencepiece]

This will take a bit of time, but then you’ll be ready to go for the rest of the course!
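If you want to see which pieces actually ended up in your environment, a small check like the one below can help. It is only a sketch: the helper name `installed_packages` and the list of package names are our own choices for illustration. It relies on the standard-library `importlib.util.find_spec`, which reports whether a top-level module can be found without importing it.

```python
from importlib.util import find_spec


def installed_packages(names=("transformers", "torch", "tensorflow", "sentencepiece")):
    """Map each top-level module name to True if it can be found in this environment.

    The default names are illustrative; pass whichever packages you care about.
    """
    return {name: find_spec(name) is not None for name in names}


# Print a short report for the current interpreter.
for name, present in installed_packages().items():
    print(f"{name}: {'installed' if present else 'missing'}")
```

Running this in a fresh Colab cell or in your terminal gives a quick view of whether a framework such as PyTorch is present next to 🤗 Transformers.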

Using a Python virtual environment

If you prefer to use a Python virtual environment, the first step is to install Python on your system. We recommend following this guide to get started.

Once you have Python installed, you should be able to run Python commands in your terminal. You can start by running the following command to ensure that it is correctly installed before proceeding to the next steps: python --version. This should print out the Python version now available on your system.

When running a Python command in your terminal, such as python --version, you should think of the program running your command as the “main” Python on your system. We recommend keeping this main installation free of any packages, and using it to create separate environments for each application you work on — this way, each application can have its own dependencies and packages, and you won’t need to worry about potential compatibility issues with other applications.

In Python this is done with virtual environments, which are self-contained directory trees that each contain a Python installation with a particular Python version alongside all the packages the application needs. Creating such a virtual environment can be done with a number of different tools, but we’ll use the official Python package for that purpose, which is called venv.

First, create the directory you’d like your application to live in — for example, you might want to make a new directory called transformers-course at the root of your home directory:

mkdir ~/transformers-course

cd ~/transformers-course

From inside this directory, create a virtual environment using the Python venv module:

python -m venv .env

You should now have a directory called .env in your otherwise empty folder:

ls -a

. .. .env

You can jump in and out of your virtual environment with the activate and deactivate scripts:

# Activate the virtual environment

source .env/bin/activate

# Deactivate the virtual environment

deactivate

You can make sure that the environment is activated by running the which python command: if it points to the virtual environment, then you have successfully activated it!

which python

/home/<user>/transformers-course/.env/bin/python
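The same check can be made from inside Python, which is handy in scripts. The sketch below assumes the environment was created with `venv` as described above; in that case `sys.prefix` points at the environment while `sys.base_prefix` still points at the main installation. The function name `in_virtualenv` is ours.

```python
import sys


def in_virtualenv():
    """Return True when the running interpreter belongs to a venv-style environment."""
    # Inside a venv, sys.prefix is the environment directory and
    # sys.base_prefix is the Python installation it was created from.
    return sys.prefix != sys.base_prefix


print("interpreter:", sys.executable)
print("inside a virtual environment:", in_virtualenv())
```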

Installing dependencies

As in the previous section on using Google Colab instances, you’ll now need to install the packages required to continue. Again, you can install the development version of 🤗 Transformers using the pip package manager:

pip install "transformers[sentencepiece]"

You’re now all set up and ready to go!

Chapter 1. Transformer models

1. Introduction

Welcome to the 🤗 Course!

This course will teach you about large language models (LLMs) and natural language processing (NLP) using libraries from the Hugging Face ecosystem — 🤗 Transformers, 🤗 Datasets, 🤗 Tokenizers, and 🤗 Accelerate — as well as the Hugging Face Hub.

We’ll also cover libraries outside the Hugging Face ecosystem. These are amazing contributions to the AI community and incredibly useful tools.

It’s completely free and without ads.

Understanding NLP and LLMs

While this course was originally focused on NLP (Natural Language Processing), it has evolved to emphasize Large Language Models (LLMs), which represent the latest advancement in the field.

What’s the difference?

- NLP (Natural Language Processing) is the broader field focused on enabling computers to understand, interpret, and generate human language. NLP encompasses many techniques and tasks such as sentiment analysis, named entity recognition, and machine translation.

- LLMs (Large Language Models) are a powerful subset of NLP models characterized by their massive size, extensive training data, and ability to perform a wide range of language tasks with minimal task-specific training. Models like the Llama, GPT, or Claude series are examples of LLMs that have revolutionized what’s possible in NLP.

Throughout this course, you’ll learn about both traditional NLP concepts and cutting-edge LLM techniques, as understanding the foundations of NLP is crucial for working effectively with LLMs.

What to expect?

Here is a brief overview of the course:

- Chapters 1 to 4 provide an introduction to the main concepts of the 🤗 Transformers library. By the end of this part of the course, you will be familiar with how Transformer models work and will know how to use a model from the Hugging Face Hub, fine-tune it on a dataset, and share your results on the Hub!

- Chapters 5 to 8 teach the basics of 🤗 Datasets and 🤗 Tokenizers before diving into classic NLP tasks and LLM techniques. By the end of this part, you will be able to tackle the most common language processing challenges by yourself.

- Chapter 9 goes beyond NLP to cover how to build and share demos of your models on the 🤗 Hub. By the end of this part, you will be ready to showcase your 🤗 Transformers application to the world!

- Chapters 10 to 12 dive into advanced LLM topics like fine-tuning, curating high-quality datasets, and building reasoning models.

This course:

- Requires a good knowledge of Python

- Is better taken after an introductory deep learning course, such as fast.ai’s Practical Deep Learning for Coders or one of the programs developed by DeepLearning.AI

- Does not expect prior PyTorch or TensorFlow knowledge, though some familiarity with either of those will help

After you’ve completed this course, we recommend checking out DeepLearning.AI’s Natural Language Processing Specialization, which covers a wide range of traditional NLP models like naive Bayes and LSTMs that are well worth knowing about!

Who are we?

About the authors:

Abubakar Abid completed his PhD at Stanford in applied machine learning. During his PhD, he founded Gradio, an open-source Python library that has been used to build over 600,000 machine learning demos. Gradio was acquired by Hugging Face, which is where Abubakar now serves as a machine learning team lead.

Ben Burtenshaw is a Machine Learning Engineer at Hugging Face. He completed his PhD in Natural Language Processing at the University of Antwerp, where he applied Transformer models to generate children’s stories for the purpose of improving literacy skills. Since then, he has focused on educational materials and tools for the wider community.

Matthew Carrigan is a Machine Learning Engineer at Hugging Face. He lives in Dublin, Ireland and previously worked as an ML engineer at Parse.ly and before that as a post-doctoral researcher at Trinity College Dublin. He does not believe we’re going to get to AGI by scaling existing architectures, but has high hopes for robot immortality regardless.

Lysandre Debut is a Machine Learning Engineer at Hugging Face and has been working on the 🤗 Transformers library since the very early development stages. His aim is to make NLP accessible for everyone by developing tools with a very simple API.

Sylvain Gugger is a Research Engineer at Hugging Face and one of the core maintainers of the 🤗 Transformers library. Previously he was a Research Scientist at fast.ai, and he co-wrote Deep Learning for Coders with fastai and PyTorch with Jeremy Howard. The main focus of his research is on making deep learning more accessible, by designing and improving techniques that allow models to train fast on limited resources.

Dawood Khan is a Machine Learning Engineer at Hugging Face. He’s from NYC and graduated from New York University studying Computer Science. After working as an iOS Engineer for a few years, Dawood quit to start Gradio with his fellow co-founders. Gradio was eventually acquired by Hugging Face.

Merve Noyan is a developer advocate at Hugging Face, working on developing tools and building content around them to democratize machine learning for everyone.

Lucile Saulnier is a machine learning engineer at Hugging Face, developing and supporting the use of open source tools. She is also actively involved in many research projects in the field of Natural Language Processing such as collaborative training and BigScience.

Lewis Tunstall is a machine learning engineer at Hugging Face, focused on developing open-source tools and making them accessible to the wider community. He is also a co-author of the O’Reilly book Natural Language Processing with Transformers.

Leandro von Werra is a machine learning engineer in the open-source team at Hugging Face and also a co-author of the O’Reilly book Natural Language Processing with Transformers. He has several years of industry experience bringing NLP projects to production by working across the whole machine learning stack.

FAQ

Here are some answers to frequently asked questions:

Does taking this course lead to a certification? Currently we do not have any certification for this course. However, we are working on a certification program for the Hugging Face ecosystem – stay tuned!

How much time should I spend on this course? Each chapter in this course is designed to be completed in 1 week, with approximately 6-8 hours of work per week. However, you can take as much time as you need to complete the course.

Where can I ask a question if I have one? If you have a question about any section of the course, just click on the “Ask a question” banner at the top of the page to be automatically redirected to the right section of the Hugging Face forums:

Note that a list of project ideas is also available on the forums if you wish to practice more once you have completed the course.

Where can I get the code for the course? For each section, click on the banner at the top of the page to run the code in either Google Colab or Amazon SageMaker Studio Lab:

The Jupyter notebooks containing all the code from the course are hosted on the huggingface/notebooks repo. If you wish to generate them locally, check out the instructions in the course repo on GitHub.

How can I contribute to the course? There are many ways to contribute to the course! If you find a typo or a bug, please open an issue on the course repo. If you would like to help translate the course into your native language, check out the instructions here.

What were the choices made for each translation? Each translation has a glossary and a TRANSLATING.txt file that details the choices that were made for machine learning jargon etc. You can find an example for German here.

Can I reuse this course? Of course! The course is released under the permissive Apache 2 license. This means that you must give appropriate credit, provide a link to the license, and indicate if changes were made. You may do so in any reasonable manner, but not in any way that suggests the licensor endorses you or your use. If you would like to cite the course, please use the following BibTeX:

@misc{huggingfacecourse,

author = {Hugging Face},

title = {The Hugging Face Course, 2022},

howpublished = "\url{https://huggingface.co/course}",

year = {2022},

note = "[Online; accessed <today>]"

}

Languages and translations

Thanks to our wonderful community, the course is available in many languages beyond English 🔥! Check out the table below to see which languages are available and who contributed to the translations:

| Language | Authors |

|---|---|

| French | @lbourdois, @ChainYo, @melaniedrevet, @abdouaziz |

| Vietnamese | @honghanhh |

| Chinese (simplified) | @zhlhyx, petrichor1122, @yaoqih |

| Bengali (WIP) | @avishek-018, @eNipu |

| German (WIP) | @JesperDramsch, @MarcusFra, @fabridamicelli |

| Spanish (WIP) | @camartinezbu, @munozariasjm, @fordaz |

| Persian (WIP) | @jowharshamshiri, @schoobani |

| Gujarati (WIP) | @pandyaved98 |

| Hebrew (WIP) | @omer-dor |

| Hindi (WIP) | @pandyaved98 |

| Bahasa Indonesia (WIP) | @gstdl |

| Italian (WIP) | @CaterinaBi, @ClonedOne, @Nolanogenn, @EdAbati, @gdacciaro |

| Japanese (WIP) | @hiromu166, @younesbelkada, @HiromuHota |

| Korean (WIP) | @Doohae, @wonhyeongseo, @dlfrnaos19 |

| Portuguese (WIP) | @johnnv1, @victorescosta, @LincolnVS |

| Russian (WIP) | @pdumin, @svv73 |

| Thai (WIP) | @peeraponw, @a-krirk, @jomariya23156, @ckingkan |

| Turkish (WIP) | @tanersekmen, @mertbozkir, @ftarlaci, @akkasayaz |

| Chinese (traditional) (WIP) | @davidpeng86 |

For some languages, the course YouTube videos have subtitles in the language. You can enable them by first clicking the CC button in the bottom right corner of the video. Then, under the settings icon ⚙️, you can select the language you want by selecting the Subtitles/CC option.

[!TIP] Don’t see your language in the above table or you’d like to contribute to an existing translation? You can help us translate the course by following the instructions here.

Let’s go 🚀

Are you ready to roll? In this chapter, you will learn:

- How to use the pipeline() function to solve NLP tasks such as text generation and classification

- About the Transformer architecture

- How to distinguish between encoder, decoder, and encoder-decoder architectures and use cases

2. Natural Language Processing and Large Language Models

Before jumping into Transformer models, let’s do a quick overview of what natural language processing is, how large language models have transformed the field, and why we care about it.

What is NLP?

NLP is a field of linguistics and machine learning focused on understanding everything related to human language. The aim of NLP tasks is not only to understand single words individually, but to be able to understand the context of those words.

The following is a list of common NLP tasks, with some examples of each:

- Classifying whole sentences: Getting the sentiment of a review, detecting if an email is spam, determining if a sentence is grammatically correct or whether two sentences are logically related or not

- Classifying each word in a sentence: Identifying the grammatical components of a sentence (noun, verb, adjective), or the named entities (person, location, organization)

- Generating text content: Completing a prompt with auto-generated text, filling in the blanks in a text with masked words

- Extracting an answer from a text: Given a question and a context, extracting the answer to the question based on the information provided in the context

- Generating a new sentence from an input text: Translating a text into another language, summarizing a text

NLP isn’t limited to written text though. It also tackles complex challenges in speech recognition and computer vision, such as generating a transcript of an audio sample or a description of an image.

The Rise of Large Language Models (LLMs)

In recent years, the field of NLP has been revolutionized by Large Language Models (LLMs). These models, which include architectures like GPT (Generative Pre-trained Transformer) and Llama, have transformed what’s possible in language processing.

[!TIP] A large language model (LLM) is an AI model trained on massive amounts of text data that can understand and generate human-like text, recognize patterns in language, and perform a wide variety of language tasks without task-specific training. They represent a significant advancement in the field of natural language processing (NLP).

LLMs are characterized by:

- Scale: They contain millions, billions, or even hundreds of billions of parameters

- General capabilities: They can perform multiple tasks without task-specific training

- In-context learning: They can learn from examples provided in the prompt

- Emergent abilities: As these models grow in size, they demonstrate capabilities that weren’t explicitly programmed or anticipated

The advent of LLMs has shifted the paradigm from building specialized models for specific NLP tasks to using a single, large model that can be prompted or fine-tuned to address a wide range of language tasks. This has made sophisticated language processing more accessible while also introducing new challenges in areas like efficiency, ethics, and deployment.

However, LLMs also have important limitations:

- Hallucinations: They can generate incorrect information confidently

- Lack of true understanding: They lack true understanding of the world and operate purely on statistical patterns

- Bias: They may reproduce biases present in their training data or inputs.

- Context windows: They have limited context windows (though this is improving)

- Computational resources: They require significant computational resources

Why is language processing challenging?

Computers don’t process information in the same way as humans. For example, when we read the sentence “I am hungry,” we can easily understand its meaning. Similarly, given two sentences such as “I am hungry” and “I am sad,” we’re able to easily determine how similar they are. For machine learning (ML) models, such tasks are more difficult. The text needs to be processed in a way that enables the model to learn from it. And because language is complex, we need to think carefully about how this processing must be done. There has been a lot of research done on how to represent text, and we will look at some methods in the next chapter.

Even with the advances in LLMs, many fundamental challenges remain. These include understanding ambiguity, cultural context, sarcasm, and humor. LLMs address these challenges through massive training on diverse datasets, but still often fall short of human-level understanding in many complex scenarios.

3. Transformers, what can they do?

In this section, we will look at what Transformer models can do and use our first tool from the 🤗 Transformers library: the pipeline() function.

[!TIP] 👀 See that Open in Colab button on the top right? Click on it to open a Google Colab notebook with all the code samples of this section. This button will be present in any section containing code examples.

If you want to run the examples locally, we recommend taking a look at the setup.

Transformers are everywhere!

Transformer models are used to solve all kinds of tasks across different modalities, including natural language processing (NLP), computer vision, audio processing, and more. Here are some of the companies and organizations using Hugging Face and Transformer models, who also contribute back to the community by sharing their models:

The 🤗 Transformers library provides the functionality to create and use those shared models. The Model Hub contains millions of pretrained models that anyone can download and use. You can also upload your own models to the Hub!

[!TIP] ⚠️ The Hugging Face Hub is not limited to Transformer models. Anyone can share any kind of models or datasets they want! Create a huggingface.co account to benefit from all available features!

Before diving into how Transformer models work under the hood, let’s look at a few examples of how they can be used to solve some interesting NLP problems.

Working with pipelines

The most basic object in the 🤗 Transformers library is the pipeline() function. It connects a model with its necessary preprocessing and postprocessing steps, allowing us to directly input any text and get an intelligible answer:

from transformers import pipeline

classifier = pipeline("sentiment-analysis")

classifier("I've been waiting for a HuggingFace course my whole life.")

[{'label': 'POSITIVE', 'score': 0.9598047137260437}]

We can even pass several sentences!

classifier(

["I've been waiting for a HuggingFace course my whole life.", "I hate this so much!"]

)

[{'label': 'POSITIVE', 'score': 0.9598047137260437},

{'label': 'NEGATIVE', 'score': 0.9994558095932007}]

By default, this pipeline selects a particular pretrained model that has been fine-tuned for sentiment analysis in English. The model is downloaded and cached when you create the classifier object. If you rerun the command, the cached model will be used instead and there is no need to download the model again.

There are three main steps involved when you pass some text to a pipeline:

- The text is preprocessed into a format the model can understand.

- The preprocessed inputs are passed to the model.

- The predictions of the model are post-processed, so you can make sense of them.

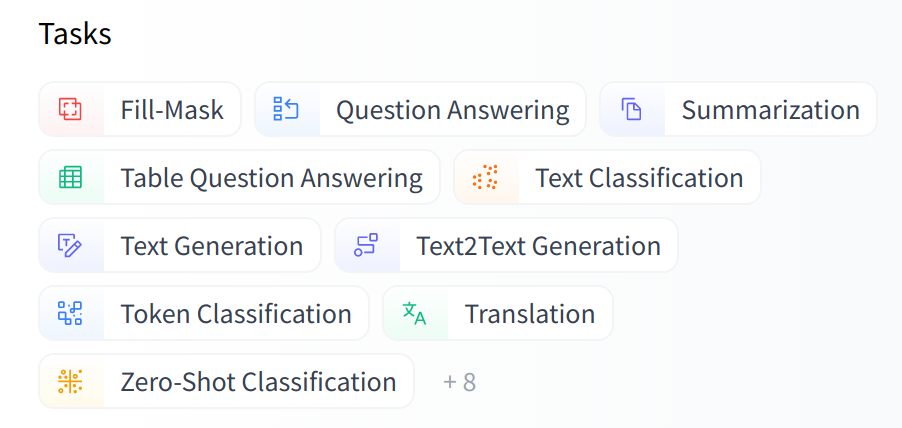
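To make the three steps concrete, here is a toy sketch in plain Python. It is not the real sentiment model: the vocabulary, weights, and function names are all made up for illustration, and a real pipeline uses a tokenizer and a neural network instead. What it does mirror is the shape of the flow: text becomes token ids, the model turns ids into one raw score (logit) per label, and post-processing applies a softmax and maps the winning index to a label.

```python
import math

# Hypothetical toy vocabulary and weights, for illustration only.
VOCAB = {"love": 0, "great": 1, "hate": 2, "awful": 3}
# One row per token id: [contribution to NEGATIVE, contribution to POSITIVE].
WEIGHTS = [[-2.0, 2.0], [-1.5, 1.5], [2.5, -2.5], [2.0, -2.0]]
ID2LABEL = {0: "NEGATIVE", 1: "POSITIVE"}


def preprocess(text):
    """Step 1: turn raw text into token ids the toy model understands."""
    words = text.lower().replace("!", " ").replace(".", " ").split()
    return [VOCAB[word] for word in words if word in VOCAB]


def model(token_ids):
    """Step 2: map token ids to one raw score (logit) per label."""
    logits = [0.0, 0.0]
    for token_id in token_ids:
        for label_id, weight in enumerate(WEIGHTS[token_id]):
            logits[label_id] += weight
    return logits


def postprocess(logits):
    """Step 3: softmax the logits and report the most likely label."""
    largest = max(logits)
    exps = [math.exp(value - largest) for value in logits]
    total = sum(exps)
    probs = [value / total for value in exps]
    best = max(range(len(probs)), key=probs.__getitem__)
    return {"label": ID2LABEL[best], "score": probs[best]}


def toy_pipeline(text):
    """Chain the three steps, the way pipeline() chains its real counterparts."""
    return postprocess(model(preprocess(text)))


print(toy_pipeline("I hate this so much!"))
print(toy_pipeline("I love this course."))
```

The returned dictionaries have the same `label` and `score` keys as the classifier output shown earlier, which is the part of the design worth remembering: whatever happens inside, the pipeline hands back something directly readable.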

Available pipelines for different modalities

The pipeline() function supports multiple modalities, allowing you to work with text, images, audio, and even multimodal tasks. In this course we’ll focus on text tasks, but it’s useful to understand the transformer architecture’s potential, so we’ll briefly outline it.

Here’s an overview of what’s available:

[!TIP] For a full and updated list of pipelines, see the 🤗 Transformers documentation.

Text pipelines

- text-generation: Generate text from a prompt

- text-classification: Classify text into predefined categories

- summarization: Create a shorter version of a text while preserving key information

- translation: Translate text from one language to another

- zero-shot-classification: Classify text without prior training on specific labels

- feature-extraction: Extract vector representations of text

Image pipelines

- image-to-text: Generate text descriptions of images

- image-classification: Identify objects in an image

- object-detection: Locate and identify objects in images

Audio pipelines

- automatic-speech-recognition: Convert speech to text

- audio-classification: Classify audio into categories

- text-to-speech: Convert text to spoken audio

Multimodal pipelines

- image-text-to-text: Respond to an image based on a text prompt

Let’s explore some of these pipelines in more detail!

Zero-shot classification

We’ll start by tackling a more challenging task where we need to classify texts that haven’t been labelled. This is a common scenario in real-world projects because annotating text is usually time-consuming and requires domain expertise. For this use case, the zero-shot-classification pipeline is very powerful: it allows you to specify which labels to use for the classification, so you don’t have to rely on the labels of the pretrained model. You’ve already seen how the model can classify a sentence as positive or negative using those two labels — but it can also classify the text using any other set of labels you like.

from transformers import pipeline

classifier = pipeline("zero-shot-classification")

classifier(

"This is a course about the Transformers library",

candidate_labels=["education", "politics", "business"],

)

{'sequence': 'This is a course about the Transformers library',

'labels': ['education', 'business', 'politics'],

'scores': [0.8445963859558105, 0.111976258456707, 0.043427448719739914]}

This pipeline is called zero-shot because you don’t need to fine-tune the model on your data to use it. It can directly return probability scores for any list of labels you want!
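The result is an ordinary dictionary, so it is easy to work with. The short sketch below reuses the exact output shown above (no model is loaded) and pairs each label with its score. The variable names are ours; the only thing taken from the pipeline is the structure of the dictionary.

```python
# Output copied from the zero-shot example above.
result = {
    "sequence": "This is a course about the Transformers library",
    "labels": ["education", "business", "politics"],
    "scores": [0.8445963859558105, 0.111976258456707, 0.043427448719739914],
}

# "labels" and "scores" are parallel lists, so zip pairs each label with its score.
ranked = list(zip(result["labels"], result["scores"]))
best_label, best_score = max(ranked, key=lambda pair: pair[1])

print(f"top label: {best_label} ({best_score:.1%})")
print(f"scores sum to {sum(result['scores']):.4f}")
```

In this output the labels come back ordered from most to least likely, and the scores add up to roughly 1, which is why they can be read as probabilities over the candidate labels.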

[!TIP] ✏️ Try it out! Play around with your own sequences and labels and see how the model behaves.

Text generation

Now let’s see how to use a pipeline to generate some text. The main idea here is that you provide a prompt and the model will auto-complete it by generating the remaining text. This is similar to the predictive text feature that is found on many phones. Text generation involves randomness, so it’s normal if you don’t get the same results as shown below.

from transformers import pipeline

generator = pipeline("text-generation")

generator("In this course, we will teach you how to")

[{'generated_text': 'In this course, we will teach you how to understand and use '

'data flow and data interchange when handling user data. We '

'will be working with one or more of the most commonly used '

'data flows — data flows of various types, as seen by the '

'HTTP'}]

You can control how many different sequences are generated with the argument num_return_sequences and the total length of the output text with the argument max_length.
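To build some intuition for these two arguments and for the randomness mentioned above, here is a toy generator in plain Python. Everything in it is hypothetical: the next-word table and the `generate` function are ours, and a real model scores every token in its vocabulary rather than looking words up in a small dictionary. Note also that the toy counts words, whereas in 🤗 Transformers `max_length` counts tokens, prompt included.

```python
import random

# Hypothetical next-word table, for illustration only.
NEXT_WORDS = {
    "to": ["use", "train", "share"],
    "use": ["models", "pipelines"],
    "train": ["models"],
    "share": ["models"],
    "models": ["and", "today"],
    "pipelines": ["and", "today"],
    "and": ["use", "train", "share"],
}


def generate(prompt, max_length, num_return_sequences, seed=None):
    """Sample several continuations of the prompt, each capped at max_length words."""
    rng = random.Random(seed)
    outputs = []
    for _ in range(num_return_sequences):
        words = prompt.split()
        # Keep sampling one word at a time until the length cap is reached
        # or the last word has no known continuation.
        while len(words) < max_length:
            candidates = NEXT_WORDS.get(words[-1])
            if not candidates:
                break
            words.append(rng.choice(candidates))
        outputs.append(" ".join(words))
    return outputs


for text in generate("we will teach you how to", max_length=10, num_return_sequences=2):
    print(text)
```

Because each word is sampled, running the script twice without a seed usually prints different continuations, just as the real pipeline does.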

[!TIP] ✏️ Try it out! Use the num_return_sequences and max_length arguments to generate two sentences of 15 words each.

Using any model from the Hub in a pipeline

The previous examples used the default model for the task at hand, but you can also choose a particular model from the Hub to use in a pipeline for a specific task — say, text generation. Go to the Model Hub and click on the corresponding tag on the left to display only the supported models for that task. You should get to a page like this one.

Let’s try the HuggingFaceTB/SmolLM2-360M model! Here’s how to load it in the same pipeline as before:

from transformers import pipeline

generator = pipeline("text-generation", model="HuggingFaceTB/SmolLM2-360M")

generator(

"In this course, we will teach you how to",

max_length=30,

num_return_sequences=2,

)

[{'generated_text': 'In this course, we will teach you how to manipulate the world and '

'move your mental and physical capabilities to your advantage.'},

{'generated_text': 'In this course, we will teach you how to become an expert and '

'practice realtime, and with a hands on experience on both real '

'time and real'}]

You can refine your search for a model by clicking on the language tags, and pick a model that will generate text in another language. The Model Hub even contains checkpoints for multilingual models that support several languages.

Once you select a model by clicking on it, you’ll see that there is a widget enabling you to try it directly online. This way you can quickly test the model’s capabilities before downloading it.

[!TIP] ✏️ Try it out! Use the filters to find a text generation model for another language. Feel free to play with the widget and use it in a pipeline!

Inference Providers

All the models can be tested directly in your browser through Inference Providers, which are available on the Hugging Face website. You can play with a model directly on its page by entering custom text and watching the model process the input.

The Inference Providers service that powers the widget is also available as a paid product, which comes in handy if you need it for your workflows. See the pricing page for more details.

Mask filling

The next pipeline you’ll try is fill-mask. The idea of this task is to fill in the blanks in a given text:

from transformers import pipeline

unmasker = pipeline("fill-mask")

unmasker("This course will teach you all about <mask> models.", top_k=2)

[{'sequence': 'This course will teach you all about mathematical models.',

'score': 0.19619831442832947,

'token': 30412,

'token_str': ' mathematical'},

{'sequence': 'This course will teach you all about computational models.',

'score': 0.04052725434303284,

'token': 38163,

'token_str': ' computational'}]

The top_k argument controls how many possibilities you want to be displayed. Note that here the model fills in the special <mask> word, which is often referred to as a mask token. Other mask-filling models might have different mask tokens, so it’s always good to verify the proper mask word when exploring other models. One way to check it is by looking at the mask word used in the widget.

[!TIP] ✏️ Try it out! Search for the bert-base-cased model on the Hub and identify its mask word in the Inference API widget. What does this model predict for the sentence in our pipeline example above?

Named entity recognition

Named entity recognition (NER) is a task where the model has to find which parts of the input text correspond to entities such as persons, locations, or organizations. Let’s look at an example:

from transformers import pipeline

ner = pipeline("ner", aggregation_strategy="simple")

ner("My name is Sylvain and I work at Hugging Face in Brooklyn.")

[{'entity_group': 'PER', 'score': 0.99816, 'word': 'Sylvain', 'start': 11, 'end': 18},

{'entity_group': 'ORG', 'score': 0.97960, 'word': 'Hugging Face', 'start': 33, 'end': 45},

{'entity_group': 'LOC', 'score': 0.99321, 'word': 'Brooklyn', 'start': 49, 'end': 57}

]

Here the model correctly identified that Sylvain is a person (PER), Hugging Face an organization (ORG), and Brooklyn a location (LOC).

We pass the option aggregation_strategy="simple" in the pipeline creation function to tell the pipeline to regroup together the parts of the sentence that correspond to the same entity: here the model correctly grouped “Hugging” and “Face” as a single organization, even though the name consists of multiple words. In fact, as we will see in the next chapter, the preprocessing even splits some words into smaller parts. For instance, Sylvain is split into four pieces: S, ##yl, ##va, and ##in. In the post-processing step, the pipeline successfully regrouped those pieces.
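To make the regrouping step more concrete, here is a minimal sketch in plain Python. It is a simplified stand-in rather than the pipeline's actual implementation: the function name group_entities, the (piece, label) input format, and the way "Hugging" is split into pieces are all assumptions made for this illustration.

```python
def group_entities(pieces):
    """Merge (piece, label) pairs into (text, label) entity groups.

    A piece starting with '##' continues the previous word; consecutive
    words with the same non-'O' label are joined into a single entity.
    """
    # Step 1: glue sub-word pieces back into whole words.
    words = []
    for piece, label in pieces:
        if piece.startswith("##") and words:
            prev_text, prev_label = words[-1]
            words[-1] = (prev_text + piece[2:], prev_label)
        else:
            words.append((piece, label))

    # Step 2: join neighbouring words that carry the same entity label.
    groups = []
    prev_label = "O"
    for text, label in words:
        if label != "O" and label == prev_label:
            groups[-1] = (groups[-1][0] + " " + text, label)
        elif label != "O":
            groups.append((text, label))
        prev_label = label
    return groups


# Hypothetical token-level predictions ("O" means "not an entity").
pieces = [
    ("My", "O"), ("name", "O"), ("is", "O"),
    ("S", "PER"), ("##yl", "PER"), ("##va", "PER"), ("##in", "PER"),
    ("and", "O"), ("I", "O"), ("work", "O"), ("at", "O"),
    ("Hu", "ORG"), ("##gging", "ORG"), ("Face", "ORG"),
    ("in", "O"), ("Brooklyn", "LOC"),
]
entities = group_entities(pieces)
print(entities)
```

The real pipeline also tracks scores and character offsets for each group, which this sketch leaves out.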

[!TIP] ✏️ Try it out! Search the Model Hub for a model able to do part-of-speech tagging (usually abbreviated as POS) in English. What does this model predict for the sentence in the example above?

Question answering

The question-answering pipeline answers questions using information from a given context:

from transformers import pipeline

question_answerer = pipeline("question-answering")

question_answerer(

question="Where do I work?",

context="My name is Sylvain and I work at Hugging Face in Brooklyn",

)

{'score': 0.6385916471481323, 'start': 33, 'end': 45, 'answer': 'Hugging Face'}

Note that this pipeline works by extracting information from the provided context; it does not generate the answer.
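Because the answer is extracted, the start and end values in the output are character offsets into the context. A small check, reusing the context and the offsets printed above, shows that slicing the context recovers the answer:

```python
context = "My name is Sylvain and I work at Hugging Face in Brooklyn"

# Values copied from the pipeline output shown above.
prediction = {"start": 33, "end": 45, "answer": "Hugging Face"}

# The answer is simply the slice of the context between the two offsets.
extracted = context[prediction["start"]:prediction["end"]]
print(extracted)
```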

Summarization

Summarization is the task of reducing a text into a shorter text while keeping all (or most) of the important aspects referenced in the text. Here’s an example:

from transformers import pipeline

summarizer = pipeline("summarization")

summarizer(

"""

America has changed dramatically during recent years. Not only has the number of

graduates in traditional engineering disciplines such as mechanical, civil,

electrical, chemical, and aeronautical engineering declined, but in most of

the premier American universities engineering curricula now concentrate on

and encourage largely the study of engineering science. As a result, there

are declining offerings in engineering subjects dealing with infrastructure,

the environment, and related issues, and greater concentration on high

technology subjects, largely supporting increasingly complex scientific

developments. While the latter is important, it should not be at the expense

of more traditional engineering.

Rapidly developing economies such as China and India, as well as other

industrial countries in Europe and Asia, continue to encourage and advance

the teaching of engineering. Both China and India, respectively, graduate

six and eight times as many traditional engineers as does the United States.

Other industrial countries at minimum maintain their output, while America

suffers an increasingly serious decline in the number of engineering graduates

and a lack of well-educated engineers.

"""

)

[{'summary_text': ' America has changed dramatically during recent years . The '

'number of engineering graduates in the U.S. has declined in '

'traditional engineering disciplines such as mechanical, civil '

', electrical, chemical, and aeronautical engineering . Rapidly '

'developing economies such as China and India, as well as other '

'industrial countries in Europe and Asia, continue to encourage '

'and advance engineering .'}]

Like with text generation, you can specify a max_length or a min_length for the result.

Translation

For translation, you can use a default model if you provide a language pair in the task name (such as "translation_en_to_fr"), but the easiest way is to pick the model you want to use on the Model Hub. Here we’ll try translating from French to English:

from transformers import pipeline

translator = pipeline("translation", model="Helsinki-NLP/opus-mt-fr-en")

translator("Ce cours est produit par Hugging Face.")

[{'translation_text': 'This course is produced by Hugging Face.'}]

Like with text generation and summarization, you can specify a max_length or a min_length for the result.

[!TIP] ✏️ Try it out! Search for translation models in other languages and try to translate the previous sentence into a few different languages.

Image and audio pipelines

Beyond text, Transformer models can also work with images and audio. Here are a few examples:

Image classification

from transformers import pipeline

image_classifier = pipeline(

task="image-classification", model="google/vit-base-patch16-224"

)

result = image_classifier(

"https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/pipeline-cat-chonk.jpeg"

)

print(result)

[{'label': 'lynx, catamount', 'score': 0.43350091576576233},

{'label': 'cougar, puma, catamount, mountain lion, painter, panther, Felis concolor',

'score': 0.034796204417943954},

{'label': 'snow leopard, ounce, Panthera uncia',

'score': 0.03240183740854263},

{'label': 'Egyptian cat', 'score': 0.02394474856555462},

{'label': 'tiger cat', 'score': 0.02288915030658245}]

Automatic speech recognition

from transformers import pipeline

transcriber = pipeline(

task="automatic-speech-recognition", model="openai/whisper-large-v3"

)

result = transcriber(

"https://huggingface.co/datasets/Narsil/asr_dummy/resolve/main/mlk.flac"

)

print(result)

{'text': ' I have a dream that one day this nation will rise up and live out the true meaning of its creed.'}

Combining data from multiple sources

One powerful application of Transformer models is their ability to combine and process data from multiple sources. This is especially useful when you need to:

- Search across multiple databases or repositories

- Consolidate information from different formats (text, images, audio)

- Create a unified view of related information

For example, you could build a system that:

- Searches for information across databases in multiple modalities like text and image.

- Combines results from different sources into a single coherent response. For example, from an audio file and text description.

- Presents the most relevant information from a database of documents and metadata.

Conclusion

The pipelines shown in this chapter are mostly for demonstrative purposes. They were programmed for specific tasks and cannot perform variations of them. In the next chapter, you’ll learn what’s inside a pipeline() function and how to customize its behavior.

4. How do Transformers work?

In this section, we will take a look at the architecture of Transformer models and dive deeper into the concepts of attention, encoder-decoder architecture, and more.

[!WARNING] 🚀 We’re taking things up a notch here. This section is detailed and technical, so don’t worry if you don’t understand everything right away. We’ll come back to these concepts later in the course.

A bit of Transformer history

Here are some reference points in the (short) history of Transformer models:

The Transformer architecture was introduced in June 2017. The focus of the original research was on translation tasks. This was followed by the introduction of several influential models, including:

June 2018: GPT, the first pretrained Transformer model, which was fine-tuned on various NLP tasks and obtained state-of-the-art results

October 2018: BERT, another large pretrained model, this one designed to produce better summaries of sentences (more on this in the next chapter!)

February 2019: GPT-2, an improved (and bigger) version of GPT that was not immediately publicly released due to ethical concerns

October 2019: T5, a multi-task focused implementation of the sequence-to-sequence Transformer architecture.

May 2020: GPT-3, an even bigger version of GPT-2 that is able to perform well on a variety of tasks without the need for fine-tuning (called zero-shot learning)

January 2022: InstructGPT, a version of GPT-3 that was trained to follow instructions better.

January 2023: Llama, a large language model that is able to generate text in a variety of languages.

September 2023: Mistral, a 7-billion-parameter language model that outperforms Llama 2 13B across all evaluated benchmarks, leveraging grouped-query attention for faster inference and sliding window attention to handle sequences of arbitrary length.

May 2024: Gemma 2, a family of lightweight, state-of-the-art open models ranging from 2B to 27B parameters that incorporate interleaved local-global attentions and group-query attention, with smaller models trained using knowledge distillation to deliver performance competitive with models 2-3 times larger.

November 2024: SmolLM2, a state-of-the-art small language model (135 million to 1.7 billion parameters) that achieves impressive performance despite its compact size, unlocking new possibilities for mobile and edge devices.

This list is far from comprehensive, and is just meant to highlight a few of the different kinds of Transformer models. Broadly, they can be grouped into three categories:

- GPT-like (also called auto-regressive Transformer models)

- BERT-like (also called auto-encoding Transformer models)

- T5-like (also called sequence-to-sequence Transformer models)

We will dive into these families in more depth later on.

Transformers are language models

All the Transformer models mentioned above (GPT, BERT, T5, etc.) have been trained as language models. This means they have been trained on large amounts of raw text in a self-supervised fashion.

Self-supervised learning is a type of training in which the objective is automatically computed from the inputs of the model. That means that humans are not needed to label the data!

This type of model develops a statistical understanding of the language it has been trained on, but it’s less useful for specific practical tasks. Because of this, the general pretrained model then goes through a process called transfer learning or fine-tuning. During this process, the model is fine-tuned in a supervised way – that is, using human-annotated labels – on a given task.

An example of a task is predicting the next word in a sentence having read the n previous words. This is called causal language modeling because the output depends on the past and present inputs, but not the future ones.

Another example is masked language modeling, in which the model predicts a masked word in the sentence.
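Both objectives are self-supervised: the training targets come from the raw text itself. The sketch below shows how such examples could be derived from a sentence with no human labels. The helper names and the "[MASK]" placeholder are assumptions for this illustration, and real models work on tokens rather than whitespace-separated words.

```python
def causal_lm_examples(words):
    """Each example pairs the words seen so far with the next word to predict."""
    return [(words[:i], words[i]) for i in range(1, len(words))]


def masked_lm_example(words, position, mask_token="[MASK]"):
    """Hide one word; the model must recover it from both sides of the gap."""
    masked = words[:position] + [mask_token] + words[position + 1:]
    return masked, words[position]


words = "This course will teach you".split()

# Causal language modeling: only past words are visible.
for context, target in causal_lm_examples(words):
    print(context, "->", target)

# Masked language modeling: words on both sides are visible.
masked, target = masked_lm_example(words, position=2)
print(masked, "->", target)
```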

Transformers are big models

Apart from a few outliers (like DistilBERT), the general strategy to achieve better performance is by increasing the models’ sizes as well as the amount of data they are pretrained on.

Unfortunately, training a model, especially a large one, requires a large amount of data. This becomes very costly in terms of time and compute resources. It even translates to environmental impact, as can be seen in the following graph.

And this is showing a project for a (very big) model led by a team consciously trying to reduce the environmental impact of pretraining. The footprint of running lots of trials to get the best hyperparameters would be even higher.

Imagine if each time a research team, a student organization, or a company wanted to train a model, it did so from scratch. This would lead to huge, unnecessary global costs!

This is why sharing language models is paramount: sharing the trained weights and building on top of already trained weights reduces the overall compute cost and carbon footprint of the community.

By the way, you can evaluate the carbon footprint of your models’ training with several tools, such as ML CO2 Impact or Code Carbon, which is integrated in 🤗 Transformers. To learn more about this, you can read this blog post, which shows you how to generate an emissions.csv file with an estimate of the footprint of your training, as well as the documentation of 🤗 Transformers addressing this topic.

Transfer Learning

Pretraining is the act of training a model from scratch: the weights are randomly initialized, and the training starts without any prior knowledge.

This pretraining is usually done on a very large corpus of data, and training can take up to several weeks.

Fine-tuning, on the other hand, is the training done after a model has been pretrained. To perform fine-tuning, you first acquire a pretrained language model, then perform additional training with a dataset specific to your task. Wait – why not simply train the model for your final use case from the start (scratch)? There are a couple of reasons:

- The pretrained model was already trained on a dataset that has some similarities with the fine-tuning dataset. The fine-tuning process is thus able to take advantage of knowledge acquired by the initial model during pretraining (for instance, with NLP problems, the pretrained model will have some kind of statistical understanding of the language you are using for your task).

- Since the pretrained model was already trained on lots of data, the fine-tuning requires way less data to get decent results.

- For the same reason, the amount of time and resources needed to get good results are much lower.

For example, one could leverage a pretrained model trained on the English language and then fine-tune it on an arXiv corpus, resulting in a science/research-based model. The fine-tuning will only require a limited amount of data: the knowledge the pretrained model has acquired is “transferred,” hence the term transfer learning.

Fine-tuning a model therefore has lower time, data, financial, and environmental costs. It is also quicker and easier to iterate over different fine-tuning schemes, as the training is less constraining than a full pretraining.

This process will also achieve better results than training from scratch (unless you have lots of data), which is why you should always try to leverage a pretrained model – one as close as possible to the task you have at hand – and fine-tune it.

General Transformer architecture

In this section, we’ll go over the general architecture of the Transformer model. Don’t worry if you don’t understand some of the concepts; there are detailed sections later covering each of the components.

The model is primarily composed of two blocks:

- Encoder (left): The encoder receives an input and builds a representation of it (its features). This means that the model is optimized to acquire understanding from the input.

- Decoder (right): The decoder uses the encoder’s representation (features) along with other inputs to generate a target sequence. This means that the model is optimized for generating outputs.

Each of these parts can be used independently, depending on the task:

- Encoder-only models: Good for tasks that require understanding of the input, such as sentence classification and named entity recognition.

- Decoder-only models: Good for generative tasks such as text generation.

- Encoder-decoder models or sequence-to-sequence models: Good for generative tasks that require an input, such as translation or summarization.

We will dive into those architectures independently in later sections.

Attention layers

A key feature of Transformer models is that they are built with special layers called attention layers. In fact, the title of the paper introducing the Transformer architecture was “Attention Is All You Need”! We will explore the details of attention layers later in the course; for now, all you need to know is that this layer will tell the model to pay specific attention to certain words in the sentence you passed it (and more or less ignore the others) when dealing with the representation of each word.

To put this into context, consider the task of translating text from English to French. Given the input “You like this course”, a translation model will need to also attend to the adjacent word “You” to get the proper translation for the word “like”, because in French the verb “like” is conjugated differently depending on the subject. The rest of the sentence, however, is not useful for the translation of that word. In the same vein, when translating “this” the model will also need to pay attention to the word “course”, because “this” translates differently depending on whether the associated noun is masculine or feminine. Again, the other words in the sentence will not matter for the translation of “this”. With more complex sentences (and more complex grammar rules), the model would need to pay special attention to words that might appear farther away in the sentence to properly translate each word.

The same concept applies to any task associated with natural language: a word by itself has a meaning, but that meaning is deeply affected by the context, which can be any other word (or words) before or after the word being studied.
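As a rough preview of the mechanics, the sketch below implements scaled dot-product attention (the formulation used in “Attention Is All You Need”) for a single query in plain Python. The two-dimensional vectors are made up for illustration and are not taken from any trained model; the function names are likewise just for this example.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # The output is a weighted average of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return weights, output


# Made-up vectors for the words "You", "like", "this", "course".
keys = [[2.0, 0.0], [0.5, 0.5], [0.0, 1.0], [0.0, 2.0]]
values = keys
query_for_like = [3.0, 0.0]  # hypothetical query that points towards "You"

weights, output = attend(query_for_like, keys, values)
print([round(w, 3) for w in weights])
```

With these numbers, most of the weight for “like” lands on “You”, mirroring the translation example above.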

Now that you have an idea of what attention layers are all about, let’s take a closer look at the Transformer architecture.

The original architecture

The Transformer architecture was originally designed for translation. During training, the encoder receives inputs (sentences) in a certain language, while the decoder receives the same sentences in the desired target language. In the encoder, the attention layers can use all the words in a sentence (since, as we just saw, the translation of a given word can be dependent on what is after as well as before it in the sentence). The decoder, however, works sequentially and can only pay attention to the words in the sentence that it has already translated (so, only the words before the word currently being generated). For example, when we have predicted the first three words of the translated target, we give them to the decoder which then uses all the inputs of the encoder to try to predict the fourth word.

To speed things up during training (when the model has access to target sentences), the decoder is fed the whole target, but it is not allowed to use future words (if it had access to the word at position 2 when trying to predict the word at position 2, the problem would not be very hard!). For instance, when trying to predict the fourth word, the attention layer will only have access to the words in positions 1 to 3.
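The restriction on future words can be pictured as a lower-triangular mask. The following sketch builds one in plain Python. It is a simplification that ignores the start-of-sequence token, and the French target sentence is only an illustrative example.

```python
def causal_mask(length):
    """Row i lists which input positions position i may attend to (0-indexed)."""
    return [[1 if j <= i else 0 for j in range(length)] for i in range(length)]


target = ["Vous", "aimez", "ce", "cours"]
mask = causal_mask(len(target))
for row in mask:
    print(row)

# Row 2 (the third input word) is the one used to predict the fourth word:
# it can see words 1 to 3 but not word 4.
visible = [word for word, allowed in zip(target, mask[2]) if allowed]
print(visible)
```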

The original Transformer architecture looked like this, with the encoder on the left and the decoder on the right:

Note that the first attention layer in a decoder block pays attention to all (past) inputs to the decoder, but the second attention layer uses the output of the encoder. It can thus access the whole input sentence to best predict the current word. This is very useful as different languages can have grammatical rules that put the words in different orders, or some context provided later in the sentence may be helpful to determine the best translation of a given word.

The attention mask can also be used in the encoder/decoder to prevent the model from paying attention to some special words – for instance, the special padding word used to make all the inputs the same length when batching together sentences.
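Here is a minimal sketch of how padding and its attention mask fit together. The helper pad_batch and the "[PAD]" placeholder are assumptions for this illustration; in practice the tokenizer produces both the padded inputs and the mask.

```python
def pad_batch(sequences, pad_token="[PAD]"):
    """Pad every sequence to the longest length and build a matching mask."""
    longest = max(len(seq) for seq in sequences)
    padded, masks = [], []
    for seq in sequences:
        extra = longest - len(seq)
        padded.append(seq + [pad_token] * extra)
        # 1 marks a real token, 0 marks padding the model should ignore.
        masks.append([1] * len(seq) + [0] * extra)
    return padded, masks


batch = [["I", "like", "this", "course"], ["Hello"]]
padded, attention_mask = pad_batch(batch)
print(padded)
print(attention_mask)
```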

Architectures vs. checkpoints

As we dive into Transformer models in this course, you’ll see mentions of architectures and checkpoints as well as models. These terms all have slightly different meanings:

- Architecture: This is the skeleton of the model – the definition of each layer and each operation that happens within the model.

- Checkpoints: These are the weights that will be loaded in a given architecture.

- Model: This is an umbrella term that isn’t as precise as “architecture” or “checkpoint”: it can mean both. This course will specify architecture or checkpoint when it matters to reduce ambiguity.

For example, BERT is an architecture while bert-base-cased, a set of weights trained by the Google team for the first release of BERT, is a checkpoint. However, one can say “the BERT model” and “the bert-base-cased model.”

5. How 🤗 Transformers solve tasks

In Transformers, what can they do?, you learned about natural language processing (NLP), speech and audio, computer vision tasks, and some important applications of them. This page will look closely at how models solve these tasks and explain what’s happening under the hood. There are many ways to solve a given task: some models may implement certain techniques or even approach the task from a new angle. For Transformer models, however, the general idea is the same. Owing to the Transformer’s flexible architecture, most models are a variant of an encoder, a decoder, or an encoder-decoder structure.

[!TIP] Before diving into specific architectural variants, it’s helpful to understand that most tasks follow a similar pattern: input data is processed through a model, and the output is interpreted for a specific task. The differences lie in how the data is prepared, what model architecture variant is used, and how the output is processed.

To explain how tasks are solved, we’ll walk through what goes on inside the model to output useful predictions. We’ll cover the following models and their corresponding tasks:

- Wav2Vec2 for audio classification and automatic speech recognition (ASR)

- Vision Transformer (ViT) and ConvNeXT for image classification

- DETR for object detection

- Mask2Former for image segmentation

- GLPN for depth estimation

- BERT for NLP tasks like text classification, token classification and question answering that use an encoder

- GPT2 for NLP tasks like text generation that use a decoder

- BART for NLP tasks like summarization and translation that use an encoder-decoder

[!TIP] Before you go further, it is good to have some basic knowledge of the original Transformer architecture. Knowing how encoders, decoders, and attention work will aid you in understanding how different Transformer models work. Be sure to check out the previous section for more information!

Transformer models for language

Language models are at the heart of modern NLP. They’re designed to understand and generate human language by learning the statistical patterns and relationships between words or tokens in text.

The Transformer was initially designed for machine translation, and since then, it has become the default architecture for a wide range of AI tasks. Some tasks lend themselves to the Transformer’s encoder structure, while others are better suited for the decoder. Still other tasks make use of both the encoder and the decoder.

How language models work

Language models work by being trained to predict the probability of a word given the context of surrounding words. This gives them a foundational understanding of language that can generalize to other tasks.

There are two main approaches for training a transformer model:

Masked language modeling (MLM): Used by encoder models like BERT, this approach randomly masks some tokens in the input and trains the model to predict the original tokens based on the surrounding context. This allows the model to learn bidirectional context (looking at words both before and after the masked word).

Causal language modeling (CLM): Used by decoder models like GPT, this approach predicts the next token based on all previous tokens in the sequence. The model can only use context from the left (previous tokens) to predict the next token.

Types of language models

In the Transformers library, language models generally fall into three architectural categories:

Encoder-only models (like BERT): These models use a bidirectional approach to understand context from both directions. They’re best suited for tasks that require deep understanding of text, such as classification, named entity recognition, and question answering.

Decoder-only models (like GPT, Llama): These models process text from left to right and are particularly good at text generation tasks. They can complete sentences, write essays, or even generate code based on a prompt.

Encoder-decoder models (like T5, BART): These models combine both approaches, using an encoder to understand the input and a decoder to generate output. They excel at sequence-to-sequence tasks like translation, summarization, and question answering.

As we covered in the previous section, language models are typically pretrained on large amounts of text data in a self-supervised manner (without human annotations), then fine-tuned on specific tasks. This approach, known as transfer learning, allows these models to adapt to many different NLP tasks with relatively small amounts of task-specific data.

In the following sections, we’ll explore specific model architectures and how they’re applied to various tasks across speech, vision, and text domains.

[!TIP] Understanding which part of the Transformer architecture (encoder, decoder, or both) is best suited for a particular NLP task is key to choosing the right model. Generally, tasks requiring bidirectional context use encoders, tasks generating text use decoders, and tasks converting one sequence to another use encoder-decoders.

Text generation

Text generation involves creating coherent and contextually relevant text based on a prompt or input.

GPT-2 is a decoder-only model pretrained on a large amount of text. It can generate convincing (though not always true!) text given a prompt and complete other NLP tasks like question answering despite not being explicitly trained to.

GPT-2 uses byte pair encoding (BPE) to tokenize words and generate a token embedding. Positional encodings are added to the token embeddings to indicate the position of each token in the sequence. The input embeddings are passed through multiple decoder blocks to output some final hidden state. Within each decoder block, GPT-2 uses a masked self-attention layer, which means GPT-2 can’t attend to future tokens. It is only allowed to attend to tokens on the left. This is different from BERT’s [mask] token because, in masked self-attention, an attention mask is used to set the score to 0 for future tokens.

The output from the decoder is passed to a language modeling head, which performs a linear transformation to convert the hidden states into logits. The labels are the next tokens in the sequence, which are created by shifting the logits to the right by one. The cross-entropy loss is calculated between the shifted logits and the labels to output the next most likely token.
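The shifted cross-entropy loss can be sketched in a few lines of plain Python. This is a toy version under stated assumptions (a vocabulary of three tokens, made-up logits, and a hypothetical function name causal_lm_loss), not the library's implementation.

```python
import math


def log_softmax(logits):
    """Log-probabilities computed in a numerically stable way."""
    peak = max(logits)
    log_total = peak + math.log(sum(math.exp(x - peak) for x in logits))
    return [x - log_total for x in logits]


def causal_lm_loss(logits, token_ids):
    """Average cross-entropy where position t is scored against token t + 1."""
    shifted_logits = logits[:-1]  # the last position has no next token
    labels = token_ids[1:]        # the first token is never a prediction target
    losses = [-log_softmax(row)[label] for row, label in zip(shifted_logits, labels)]
    return sum(losses) / len(losses)


# Toy sequence of 3 token ids; one row of made-up logits per position.
token_ids = [0, 2, 1]
logits = [
    [0.1, 0.2, 3.0],  # position 0 favours token 2, which is the next token
    [0.3, 2.5, 0.1],  # position 1 favours token 1, which is the next token
    [1.0, 1.0, 1.0],  # position 2 is dropped by the shift
]
loss = causal_lm_loss(logits, token_ids)

# A model with no preference (all logits equal) scores log(3) on 3 tokens.
uniform_loss = causal_lm_loss([[0.0, 0.0, 0.0]] * 3, token_ids)
print(loss, uniform_loss)
```

A lower loss means the logits put more probability on the token that actually comes next.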

GPT-2’s pretraining objective is based entirely on causal language modeling, predicting the next word in a sequence. This makes GPT-2 especially good at tasks that involve generating text.

Ready to try your hand at text generation? Check out our complete causal language modeling guide to learn how to finetune DistilGPT-2 and use it for inference!

[!TIP] For more information about text generation, check out the text generation strategies guide!

Text classification

Text classification involves assigning predefined categories to text documents, such as sentiment analysis, topic classification, or spam detection.

BERT is an encoder-only model and is the first model to effectively implement deep bidirectionality to learn richer representations of the text by attending to words on both sides.

BERT uses WordPiece tokenization to generate a token embedding of the text. To tell the difference between a single sentence and a pair of sentences, a special [SEP] token is added to differentiate them. A special [CLS] token is added to the beginning of every sequence of text. The final output with the [CLS] token is used as the input to the classification head for classification tasks. BERT also adds a segment embedding to denote whether a token belongs to the first or second sentence in a pair of sentences.

BERT is pretrained with two objectives: masked language modeling and next-sentence prediction. In masked language modeling, some percentage of the input tokens are randomly masked, and the model needs to predict these. This solves the issue of bidirectionality, where the model could cheat and see all the words and “predict” the next word. The final hidden states of the predicted mask tokens are passed to a feedforward network with a softmax over the vocabulary to predict the masked word.

The second pretraining objective is next-sentence prediction. The model must predict whether sentence B follows sentence A. Half of the time sentence B is the next sentence, and the other half of the time, sentence B is a random sentence. The prediction, whether it is the next sentence or not, is passed to a feedforward network with a softmax over the two classes (IsNext and NotNext).

The input embeddings are passed through multiple encoder layers to output some final hidden states.
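The input layout described above, with [CLS] at the start, [SEP] after each sentence, and a segment id per token, can be sketched as follows. The helper name build_bert_inputs is hypothetical, and the word-level tokens stand in for real WordPiece output.

```python
def build_bert_inputs(tokens_a, tokens_b=None):
    """Return (tokens, segment_ids) laid out as [CLS] A [SEP] (B [SEP])."""
    tokens = ["[CLS]"] + tokens_a + ["[SEP]"]
    segment_ids = [0] * len(tokens)  # first sentence, including [CLS] and [SEP]
    if tokens_b is not None:
        tokens += tokens_b + ["[SEP]"]
        segment_ids += [1] * (len(tokens_b) + 1)  # second sentence and its [SEP]
    return tokens, segment_ids


single_tokens, single_segments = build_bert_inputs(["I", "work", "here"])
pair_tokens, pair_segments = build_bert_inputs(["Where", "?"], ["In", "Brooklyn"])
print(single_tokens, single_segments)
print(pair_tokens, pair_segments)
```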

To use the pretrained model for text classification, add a sequence classification head on top of the base BERT model. The sequence classification head is a linear layer that accepts the final hidden states and performs a linear transformation to convert them into logits. The cross-entropy loss is calculated between the logits and target to find the most likely label.
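A sequence classification head is small enough to write out by hand. In the sketch below, the hidden state, weights, and bias are made-up numbers (a real head is learned during fine-tuning), and the two classes are assumed to be negative and positive sentiment.

```python
import math


def linear(hidden, weights, bias):
    """One logit per class: dot(hidden, weights[c]) + bias[c]."""
    return [sum(h * w for h, w in zip(hidden, row)) + b for row, b in zip(weights, bias)]


def softmax(logits):
    """Convert logits into class probabilities."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, target):
    """Negative log-probability assigned to the correct class."""
    return -math.log(softmax(logits)[target])


# Made-up final hidden state of the [CLS] token (hidden size 3) and a
# made-up head for 2 classes: 0 = negative, 1 = positive.
cls_hidden = [0.5, -1.0, 2.0]
head_weights = [[0.2, 0.4, -0.6], [-0.1, -0.3, 0.9]]
head_bias = [0.0, 0.1]

logits = linear(cls_hidden, head_weights, head_bias)
predicted = logits.index(max(logits))
loss = cross_entropy(logits, target=1)
print(logits, predicted, loss)
```

During fine-tuning, this loss is what gets minimized, updating both the head and the underlying BERT weights.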

Ready to try your hand at text classification? Check out our complete text classification guide to learn how to finetune DistilBERT and use it for inference!

Token classification

Token classification involves assigning a label to each token in a sequence, such as in named entity recognition or part-of-speech tagging.

To use BERT for token classification tasks like named entity recognition (NER), add a token classification head on top of the base BERT model. The token classification head is a linear layer that accepts the final hidden states and performs a linear transformation to convert them into logits. The cross-entropy loss is calculated between the logits and each token’s label to find the most likely label.

Ready to try your hand at token classification? Check out our complete token classification guide to learn how to finetune DistilBERT and use it for inference!

Question answering

Question answering involves finding the answer to a question within a given context or passage.

To use BERT for question answering, add a span classification head on top of the base BERT model. This linear layer accepts the final hidden states and performs a linear transformation to compute the span start and end logits corresponding to the answer. The cross-entropy loss is calculated between the logits and the label position to find the most likely span of text corresponding to the answer.

Ready to try your hand at question answering? Check out our complete question answering guide to learn how to finetune DistilBERT and use it for inference!

[!TIP] 💡 Notice how easy it is to use BERT for different tasks once it’s been pretrained. You only need to add a specific head to the pretrained model to manipulate the hidden states into your desired output!

Summarization

Summarization involves condensing a longer text into a shorter version while preserving its key information and meaning.

Encoder-decoder models like BART and T5 are designed for the sequence-to-sequence pattern of a summarization task. We’ll explain how BART works in this section, and then you can try finetuning T5 at the end.

BART’s encoder architecture is very similar to BERT and accepts a token and positional embedding of the text. BART is pretrained by corrupting the input and then reconstructing it with the decoder. Unlike other encoders with specific corruption strategies, BART can apply any type of corruption. The text infilling corruption strategy works the best though. In text infilling, a number of text spans are replaced with a single [mask] token. This is important because the model has to predict the masked tokens, and it teaches the model to predict the number of missing tokens. The input embeddings and masked spans are passed through the encoder to output some final hidden states, but unlike BERT, BART doesn’t add a final feedforward network at the end to predict a word.

The encoder’s output is passed to the decoder, which must predict the masked tokens and any uncorrupted tokens from the encoder’s output. This gives additional context to help the decoder restore the original text. The output from the decoder is passed to a language modeling head, which performs a linear transformation to convert the hidden states into logits. The cross-entropy loss is calculated between the logits and the label, which is just the token shifted to the right.
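To illustrate text infilling, the sketch below replaces whole spans with a single mask token each. The span positions are fixed by hand here, whereas pretraining samples them at random; the helper name infill and the example sentence are assumptions for this illustration.

```python
def infill(tokens, spans, mask_token="[mask]"):
    """Replace each (start, length) span with a single mask token.

    Spans must be non-overlapping and sorted by start index.
    """
    corrupted, cursor = [], 0
    for start, length in spans:
        corrupted.extend(tokens[cursor:start])  # keep the untouched tokens
        corrupted.append(mask_token)            # one mask for the whole span
        cursor = start + length
    corrupted.extend(tokens[cursor:])
    return corrupted


tokens = "the quick brown fox jumps over the lazy dog".split()
corrupted = infill(tokens, spans=[(1, 2), (5, 3)])
print(corrupted)
```

Since a two-word span and a three-word span both collapse to one mask, the model cannot tell from the input how many tokens are missing and has to predict that as well.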

Ready to try your hand at summarization? Check out our complete summarization guide to learn how to finetune T5 and use it for inference!

[!TIP] For more information about text generation, check out the text generation strategies guide!

Translation

Translation involves converting text from one language to another while preserving its meaning. Translation is another example of a sequence-to-sequence task, which means you can use an encoder-decoder model like BART or T5 to do it. We’ll explain how BART works in this section, and then you can try finetuning T5 at the end.

BART adapts to translation by adding a separate randomly initialized encoder to map a source language to an input that can be decoded into the target language. This new encoder’s embeddings are passed to the pretrained encoder instead of the original word embeddings. Training happens in two steps. In the first step, only the source encoder, positional embeddings, and input embeddings are updated with the cross-entropy loss from the model output, while the remaining model parameters are frozen. In the second step, all the model parameters are trained together. BART has since been followed up by a multilingual version, mBART, intended for translation and pretrained on many different languages.

Ready to try your hand at translation? Check out our complete translation guide to learn how to finetune T5 and use it for inference!

[!TIP] As you’ve seen throughout this guide, many models follow similar patterns despite addressing different tasks. Understanding these common patterns can help you quickly grasp how new models work and how to adapt existing models to your specific needs.

Modalities beyond text

Transformers are not limited to text. They can also be applied to other modalities like speech and audio, images, and video. This course focuses on text, but we can briefly introduce the other modalities.

Speech and audio

Let’s start by exploring how Transformer models handle speech and audio data, which presents unique challenges compared to text or images.

Whisper is an encoder-decoder (sequence-to-sequence) transformer pretrained on 680,000 hours of labeled audio data. This amount of pretraining data enables zero-shot performance on audio tasks in English and many other languages. The decoder allows Whisper to map the encoder’s learned speech representations to useful outputs, such as text, without additional fine-tuning. Whisper just works out of the box.

The diagram is from the Whisper paper.

This model has two main components:

An encoder processes the input audio. The raw audio is first converted into a log-Mel spectrogram. This spectrogram is then passed through a Transformer encoder network.

A decoder takes the encoded audio representation and autoregressively predicts the corresponding text tokens. It’s a standard Transformer decoder trained to predict the next text token given the previous tokens and the encoder output. Special tokens are used at the beginning of the decoder input to steer the model towards specific tasks like transcription, translation, or language identification.
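
The special tokens mentioned above can be pictured as a short prefix placed at the start of the decoder input. The sketch below only builds that prefix as a list of strings; the helper name `whisper_decoder_prefix` is hypothetical, and the token spellings follow the format used by the released Whisper tokenizer as far as we know, so treat them as an assumption rather than a reference.

```python
def whisper_decoder_prefix(language="en", task="transcribe", timestamps=False):
    """Build the task-steering prefix for the decoder (illustrative only)."""
    if task not in ("transcribe", "translate"):
        raise ValueError("task must be 'transcribe' or 'translate'")
    prefix = ["<|startoftranscript|>", f"<|{language}|>", f"<|{task}|>"]
    if not timestamps:
        # Tell the decoder to emit plain text without timestamp tokens.
        prefix.append("<|notimestamps|>")
    return prefix

# Transcribe English speech as English text.
print(whisper_decoder_prefix("en", "transcribe"))
# Translate French speech into English text.
print(whisper_decoder_prefix("fr", "translate"))
```

The same pretrained weights serve both calls; only the prefix changes, which is how one model covers several tasks.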

Whisper was pretrained on a massive and diverse dataset of 680,000 hours of labeled audio data collected from the web. This large-scale, weakly supervised pretraining is the key to its strong zero-shot performance across many languages and tasks.

Now that Whisper is pretrained, you can use it directly for zero-shot inference or finetune it on your data for improved performance on specific tasks like automatic speech recognition or speech translation!

[!TIP] The key innovation in Whisper is its training on an unprecedented scale of diverse, weakly supervised audio data from the internet. This allows it to generalize remarkably well to different languages, accents, and tasks without task-specific finetuning.

Automatic speech recognition

To use the pretrained model for automatic speech recognition, you leverage its full encoder-decoder structure. The encoder processes the audio input, and the decoder autoregressively generates the transcript token by token. When fine-tuning, the model is typically trained using a standard sequence-to-sequence loss (like cross-entropy) to predict the correct text tokens based on the audio input.

The easiest way to use a fine-tuned model for inference is within a pipeline.

from transformers import pipeline

transcriber = pipeline(
    task="automatic-speech-recognition", model="openai/whisper-base.en"
)
transcriber("https://huggingface.co/datasets/Narsil/asr_dummy/resolve/main/mlk.flac")
# Output: {'text': ' I have a dream that one day this nation will rise up and live out the true meaning of its creed.'}

Ready to try your hand at automatic speech recognition? Check out our complete automatic speech recognition guide to learn how to finetune Whisper and use it for inference!

Computer vision

Now let’s move on to computer vision tasks, which deal with understanding and interpreting visual information from images or videos.

There are two ways to approach computer vision tasks:

- Split an image into a sequence of patches and process them in parallel with a Transformer.

- Use a modern CNN, like ConvNeXT, which relies on convolutional layers but adopts modern network designs.

[!TIP] A third approach mixes Transformers with convolutions (for example, Convolutional Vision Transformer or LeViT). We won’t discuss those because they just combine the two approaches we examine here.

ViT and ConvNeXT are commonly used for image classification, but for other vision tasks like object detection, segmentation, and depth estimation, we’ll look at DETR, Mask2Former and GLPN, respectively; these models are better suited for those tasks.

Image classification

Image classification is one of the fundamental computer vision tasks. Let’s see how different model architectures approach this problem.

ViT and ConvNeXT can both be used for image classification; the main difference is that ViT uses an attention mechanism while ConvNeXT uses convolutions.

ViT replaces convolutions entirely with a pure Transformer architecture. If you’re familiar with the original Transformer, then you’re already most of the way toward understanding ViT.

The main change ViT introduced was in how images are fed to a Transformer:

An image is split into square non-overlapping patches, each of which gets turned into a vector or patch embedding. The patch embeddings are generated from a convolutional 2D layer which creates the proper input dimensions (which for a base Transformer is 768 values for each patch embedding). If you had a 224x224 pixel image, you could split it into 196 16x16 image patches. Just like how text is tokenized into words, an image is “tokenized” into a sequence of patches.

A learnable embedding, a special [CLS] token, is added to the beginning of the patch embeddings just like BERT. The final hidden state of the [CLS] token is used as the input to the attached classification head; other outputs are ignored. This token helps the model learn how to encode a representation of the image.

The last thing to add to the patch and learnable embeddings are the position embeddings because the model doesn’t know how the image patches are ordered. The position embeddings are also learnable and have the same size as the patch embeddings. Finally, all of the embeddings are passed to the Transformer encoder.

The output, specifically only the output with the [CLS] token, is passed to a multilayer perceptron head (MLP). ViT’s pretraining objective is simply classification. Like other classification heads, the MLP head converts the output into logits over the class labels and calculates the cross-entropy loss to find the most likely class.
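
The patch arithmetic in the steps above can be checked with a few lines of plain Python. This is a toy sketch: it uses a single-channel image stored as nested lists and a hypothetical `patchify` helper, whereas a real ViT works on multi-channel tensors and projects each patch with a learned layer.

```python
def patchify(image, patch_size):
    """Split an H x W image (list of rows) into flattened, non-overlapping patches."""
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image size must be divisible by patch_size")
    patches = []
    for top in range(0, height, patch_size):          # patches in row-major order
        for left in range(0, width, patch_size):
            patch = [
                image[r][c]
                for r in range(top, top + patch_size)
                for c in range(left, left + patch_size)
            ]
            patches.append(patch)
    return patches

# Toy 224x224 single-channel image whose pixel value encodes its position.
image = [[r * 224 + c for c in range(224)] for r in range(224)]
patches = patchify(image, patch_size=16)

num_patches = len(patches)   # (224 / 16) ** 2
seq_len = num_patches + 1    # one extra position for the [CLS] embedding
print(num_patches, len(patches[0]), seq_len)
```

Each flattened patch plays the role of a token, and the sequence the encoder sees is one element longer than the patch count because of the prepended [CLS] embedding.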

Ready to try your hand at image classification? Check out our complete image classification guide to learn how to fine-tune ViT and use it for inference!

[!TIP] Notice the parallel between ViT and BERT: both use a special token ([CLS]) to capture the overall representation, both add position information to their embeddings, and both use a Transformer encoder to process the sequence of tokens/patches.

6. Transformer Architectures

In the previous sections, we introduced the general Transformer architecture and explored how these models can solve various tasks. Now, let’s take a closer look at the three main architectural variants of Transformer models, understand when to use each one, and see how those architectures are applied to different language tasks.

[!TIP] Remember that most Transformer models use one of three architectures: encoder-only, decoder-only, or encoder-decoder (sequence-to-sequence). Understanding these differences will help you choose the right model for your specific task.

Encoder models

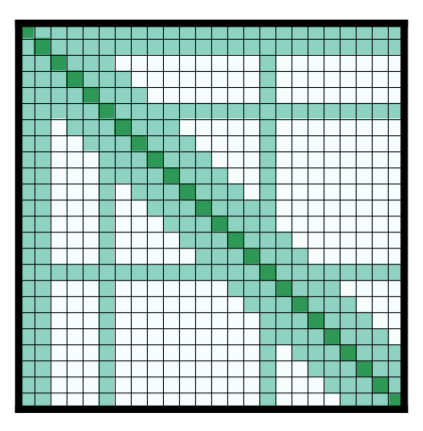

Encoder models use only the encoder of a Transformer model. At each stage, the attention layers can access all the words in the initial sentence. These models are often characterized as having “bi-directional” attention, and are often called auto-encoding models.

The pretraining of these models usually revolves around somehow corrupting a given sentence (for instance, by masking random words in it) and tasking the model with finding or reconstructing the initial sentence.

Encoder models are best suited for tasks requiring an understanding of the full sentence, such as sentence classification, named entity recognition (and more generally word classification), and extractive question answering.

[!TIP] As we saw in How 🤗 Transformers solve tasks, encoder models like BERT excel at understanding text because they can look at the entire context in both directions. This makes them perfect for tasks where comprehension of the whole input is important.

Representatives of this family of models include:

Decoder models

Decoder models use only the decoder of a Transformer model. At each stage, for a given word the attention layers can only access the words positioned before it in the sentence. These models are often called auto-regressive models.
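
One way to picture the difference between encoder and decoder attention is through the attention mask. The sketch below builds both masks as nested lists, where a 1 in row `i`, column `j` means position `i` may attend to position `j`. The function names are made up for this example, and the causal mask here lets each position see itself as well as earlier positions, which is the usual convention in practice.

```python
def bidirectional_mask(n):
    """Encoder-style mask: every position can attend to every other position."""
    return [[1] * n for _ in range(n)]

def causal_mask(n):
    """Decoder-style mask: position i can attend only to positions j <= i."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]

for name, mask in [("encoder", bidirectional_mask(4)), ("decoder", causal_mask(4))]:
    print(name)
    for row in mask:
        print(" ", row)
```

The lower-triangular shape of the causal mask is what prevents a decoder from looking at future tokens while it is trained to predict them.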

The pretraining of decoder models usually revolves around predicting the next word in the sentence.

These models are best suited for tasks involving text generation.

[!TIP] Decoder models like GPT are designed to generate text by predicting one token at a time. As we explored in How 🤗 Transformers solve tasks, they can only see previous tokens, which makes them excellent for creative text generation but less ideal for tasks requiring bidirectional understanding.

Representatives of this family of models include:

Modern Large Language Models (LLMs)

Most modern Large Language Models (LLMs) use the decoder-only architecture. These models have grown dramatically in size and capabilities over the past few years, with some of the largest models containing hundreds of billions of parameters.

Modern LLMs are typically trained in two phases:

- Pretraining: The model learns to predict the next token on vast amounts of text data

- Instruction tuning: The model is fine-tuned to follow instructions and generate helpful responses

This approach has led to models that can understand and generate human-like text across a wide range of topics and tasks.
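
The pretraining objective can be sketched as turning one piece of text into many small prediction problems. The helper below is hypothetical and works on whole words for readability; real models operate on subword token IDs and compute all of these predictions in a single pass.

```python
def next_token_pairs(tokens):
    """Return (context, target) pairs: each prefix is used to predict the token after it."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]

tokens = ["Transformers", "are", "everywhere", "!"]
for context, target in next_token_pairs(tokens):
    print(context, "->", target)
```

Instruction tuning reuses the same next-token objective, but on examples made of an instruction followed by a desired response.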

Key capabilities of modern LLMs

Modern decoder-based LLMs have demonstrated impressive capabilities:

| Capability | Description | Example |

|---|---|---|

| Text generation | Creating coherent and contextually relevant text | Writing essays, stories, or emails |

| Summarization | Condensing long documents into shorter versions | Creating executive summaries of reports |

| Translation | Converting text between languages | Translating English to Spanish |

| Question answering | Providing answers to factual questions | “What is the capital of France?” |

| Code generation | Writing or completing code snippets | Creating a function based on a description |

| Reasoning | Working through problems step by step | Solving math problems or logical puzzles |

| Few-shot learning | Learning from a few examples in the prompt | Classifying text after seeing just 2-3 examples |
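
As an illustration of the few-shot learning row, the sketch below assembles a prompt containing a handful of labeled examples followed by a new input. The function name, the `Text:`/`Label:` layout, and the sample reviews are assumptions made for this example; any consistent format can work, and the resulting string would be passed to a text generation model.

```python
def build_few_shot_prompt(examples, query, instruction="Classify the sentiment of each text."):
    """Format labeled examples plus one unlabeled query into a single prompt string."""
    lines = [instruction, ""]
    for text, label in examples:
        lines.append(f"Text: {text}")
        lines.append(f"Label: {label}")
        lines.append("")
    lines.append(f"Text: {query}")
    lines.append("Label:")  # the model is expected to continue from here
    return "\n".join(lines)

examples = [
    ("I loved this movie.", "positive"),
    ("The plot was a mess.", "negative"),
]
prompt = build_few_shot_prompt(examples, "What a wonderful soundtrack!")
print(prompt)
```

No weights are updated here; the examples influence the model only through the context it reads before generating the label.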

You can experiment with decoder-based LLMs directly in your browser via model repo pages on the Hub. Here’s an example with the classic GPT-2 (OpenAI’s finest open source model!):

View GPT-2 model on Hugging Face

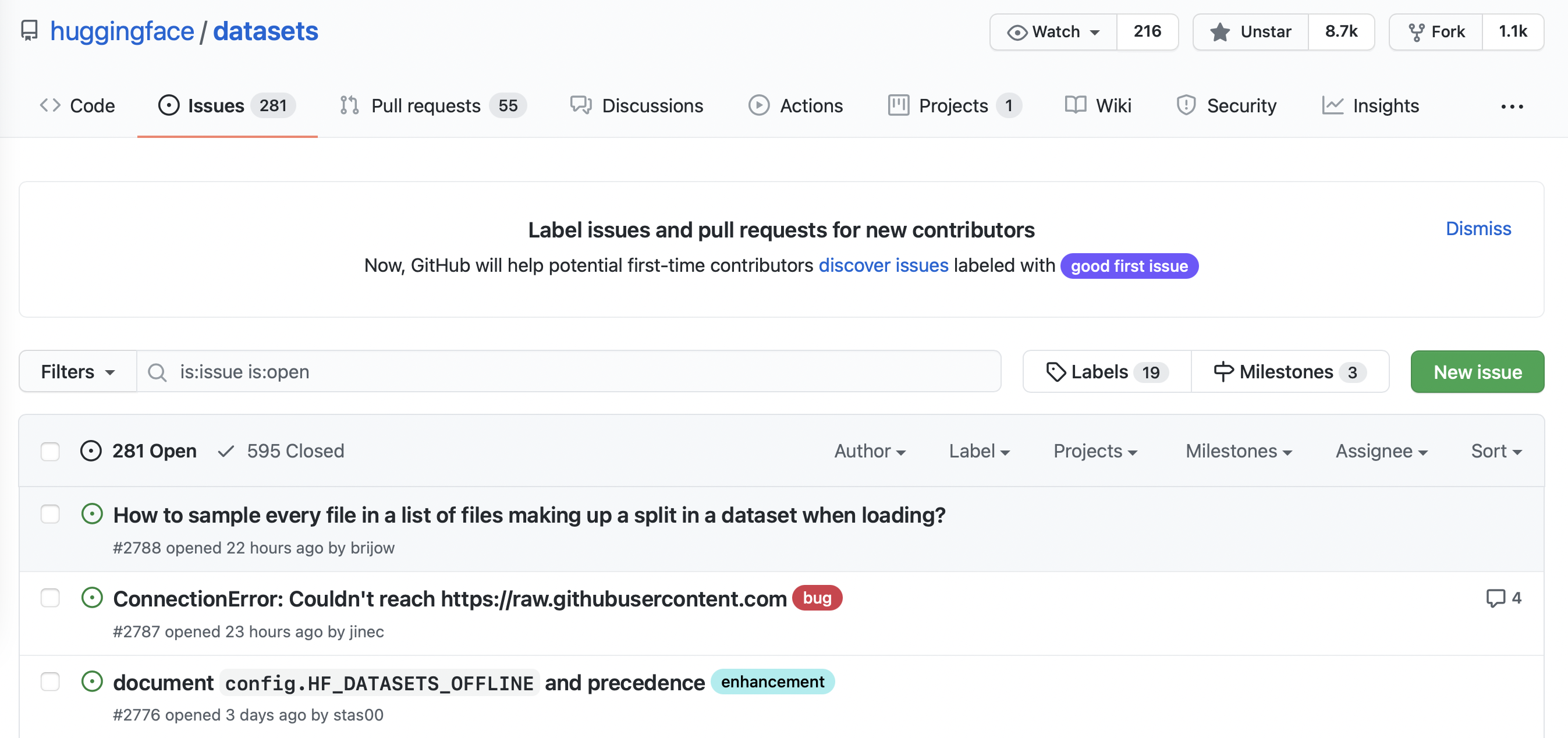

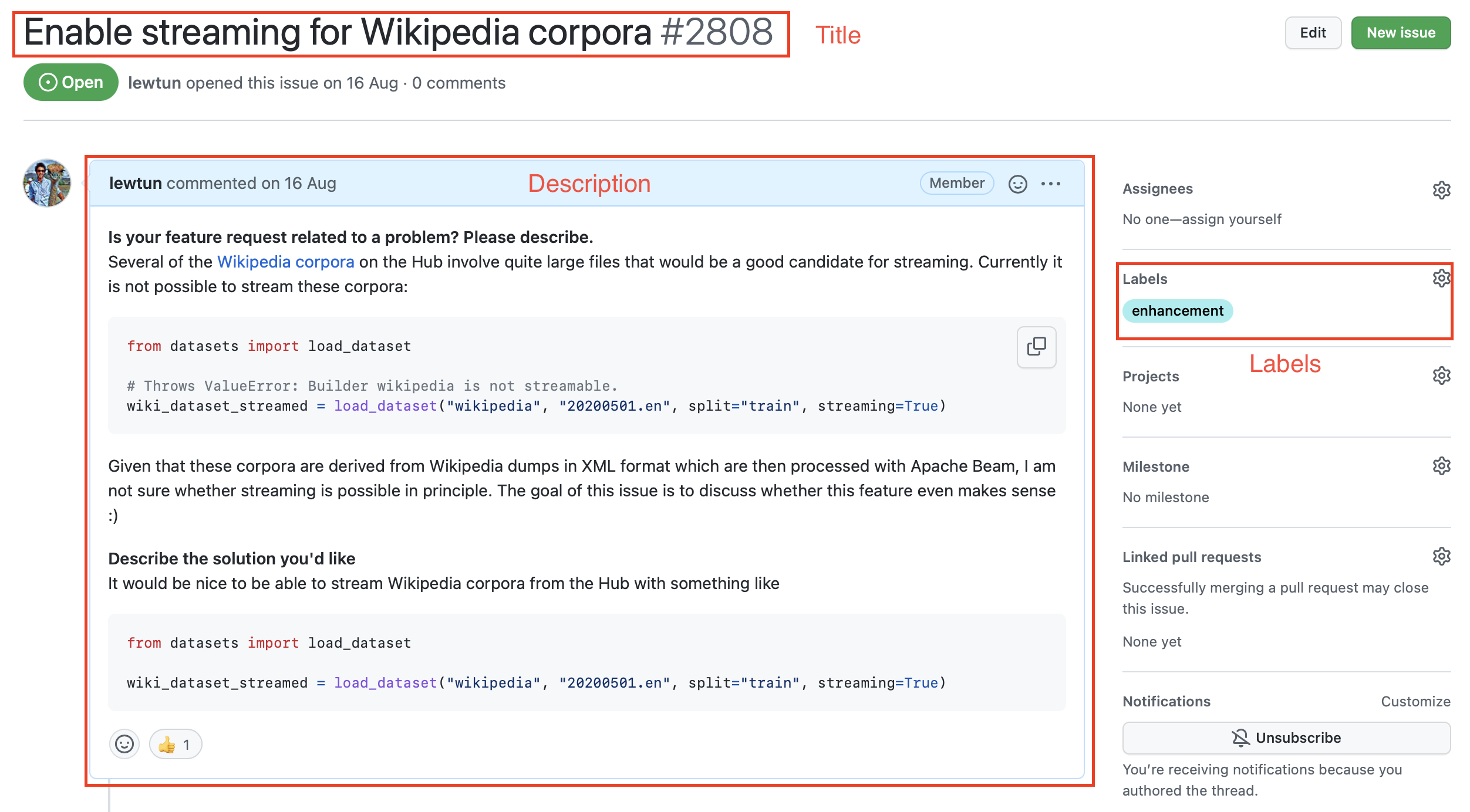

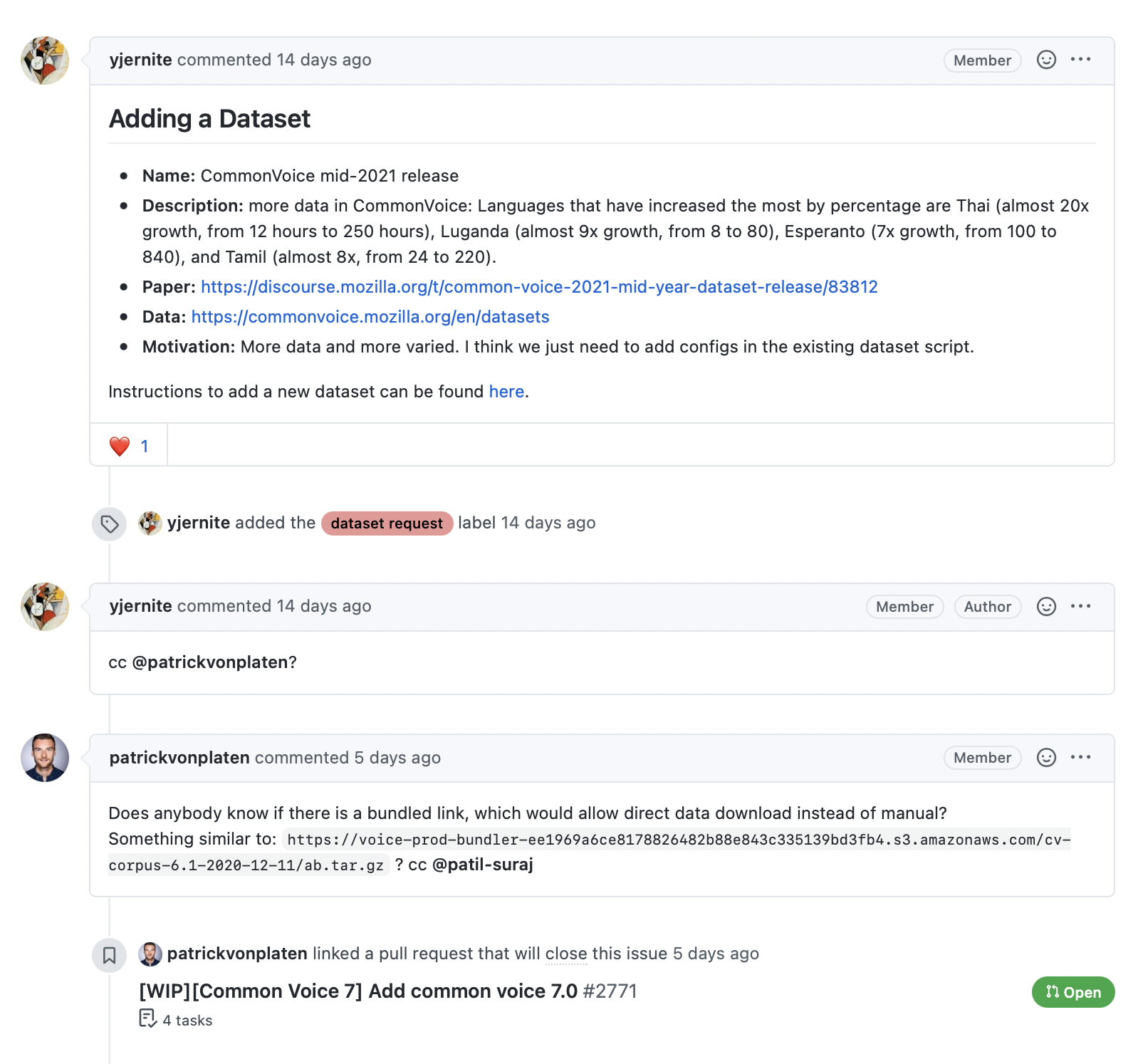

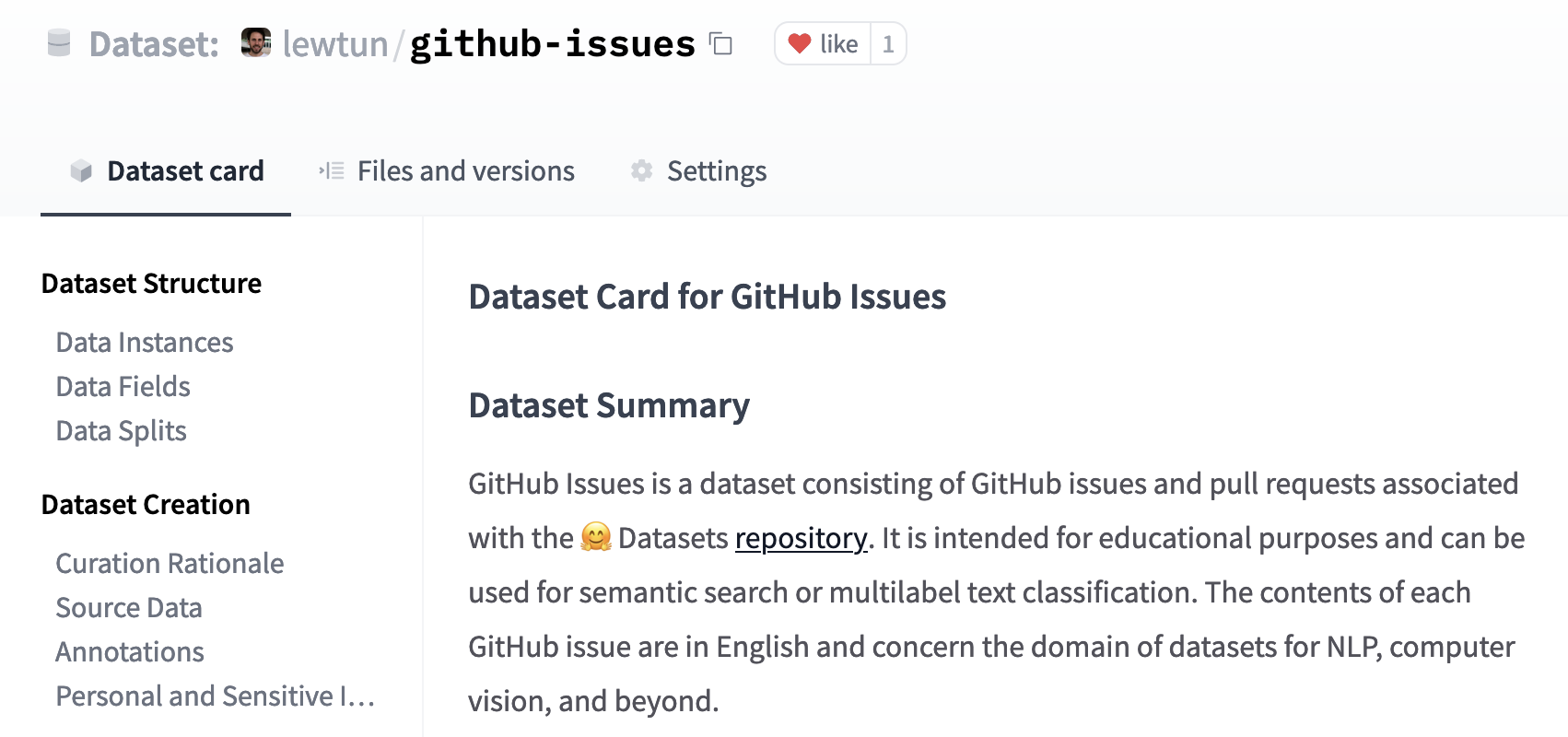

Sequence-to-sequence models